By Charles Chen. Jul 4, 2022

In Part 1 of this series, I talked about why AWS is probably not the best choice for SaaS startups who do not already have deep AWS expertise on the team and why I personally think that Google Cloud and Microsoft Azure are better suited for startups that lack deep technical experience and want to iterate rapidly.

Don’t get me wrong: for startups with teams already well-versed in AWS, AWS is the way to go. But what I have learned through my own journey is that one of the biggest challenges for startups is minimizing the complexity that can hamper a team’s agility and speed, and that complexity tends to be higher in AWS.

In this second part, I’d like to explore where I think each of the platforms really shines and why an early stage startup should consider one platform over the other:

- AWS — Ecosystem, Access to Developers, Broader Community Support, and Standout Tech

- Azure — Functions, Static Web Apps, Tooling Integration, and Best Free Tier

- GCP — Pub/Sub/Cloud Tasks/Cloud Scheduler, Containers, and Kubernetes

Unfortunately, as broad as these platforms are these days, I cannot comment on services that I haven’t had hands-on experience with. In some cases, the services are only marginally different: all three platforms offer image recognition as a service, for example, and in my usage none stood out as exceptionally better. If my insight seems unusually reductive, that’s because it is focused on building the core of SaaS applications and APIs, where I have personally worked across all three clouds and where each platform really stands out.

If you have experience working with other capabilities across these clouds (e.g. ML, big data), please leave a comment!

AWS

From the first part of this series, you might think that I wouldn’t recommend AWS at all; however, this is not the case.

There are in fact several ways in which AWS shines that are unmatched by GCP and Azure.

Ecosystem

One of the strongest cases for AWS is that it has the biggest third-party ecosystem by virtue of also having the largest market share. A great example is LocalStack, which provides a nearly fully functional clone of AWS that you can run locally. This makes activities like CI/CD and local development on AWS an experience that neither GCP nor Azure can match.

In contrast, Azure CosmosDB still lacks a local emulator that can run on Apple M1 hardware. While Microsoft has tools like Azurite that emulate parts of the stack, they are really no match for LocalStack, something that can only exist where there’s a large enough customer base to support such a tool.

AWS first-party tooling for local emulation is generally more complete as well. Being able to run the DynamoDB emulator in CI/CD and run full integration tests against it is a huge win.

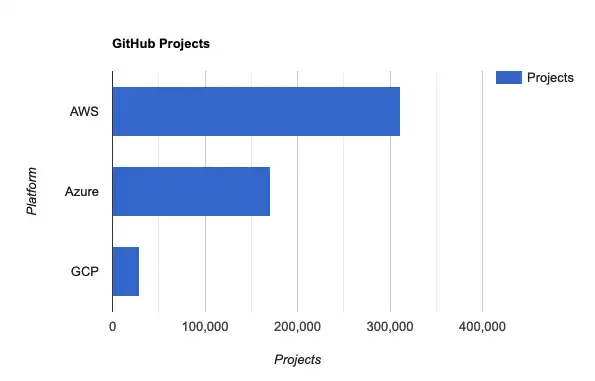

Searching GitHub for AWS, Azure, and GCP yields the following:

The sheer number of projects — especially 3rd party commercial and open source projects — for AWS is a byproduct of its first-mover advantage and dominance in the market.

Access to Developers

Because AWS has such a large market share and has been around longer, teams are also more likely to find developers with AWS experience than with Azure or GCP experience.

For startups, this means that picking AWS can be a big boon when it comes time to scale the team, because it gives access to a larger pool of experienced engineers to pick from.

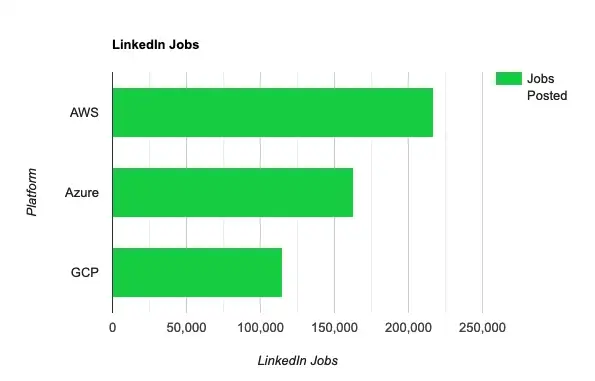

Searching on LinkedIn jobs for AWS, Azure, and GCP yields the following results:

It’s clear that there’s more demand and intuitively more engineers with AWS experience.

Broader Community Support

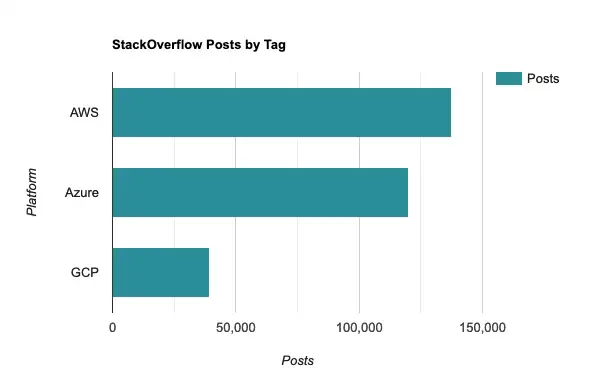

As a consequence, you’ll also find broader community support. On StackOverflow, searching for the tags [aws], [azure], and [gcp] yields the following results:

This is hardly a scientific exploration, but it clearly reflects the smaller community of GCP developers compared to AWS, even if GCP has some really great features that help startups move fast.

Standout Tech

It’s not just the ecosystem and market share; AWS also has a few tech gems as part of that philosophy of having a solution for every niche.

For example, Lambda, despite lacking higher level abstractions like those in Azure Functions, has the lowest cold start times of the three serverless function runtimes. For use cases sensitive to cold starts, Lambda is the best-performing option. If you’re open to C# and .NET, AWS .NET Annotation Lambda Framework takes a small slice of the DX of Azure Functions and brings it to Lambda.

AWS is the only one of the big three with a managed GraphQL service with AppSync and it’s hard not to be intrigued by the potential of building some really gnarly stuff on it. AppSync allows a team to effectively create a serverless, API-less GraphQL endpoint that can abstract backend access to anything by using HTTP resolvers (though you have to get comfortable with the clunky Apache Velocity Templating Language to get the most out of it). It can even directly access DynamoDB, S3, and Lambda without having to write any API layer at all! AppSync is an underrated gem for teams building serverless apps, but its complexity cliff is quite high. It almost feels like a science experiment — someone’s crazy awesome idea that actually made it to production!

For teams with use cases that map to graph databases, AWS Neptune’s support of openCypher makes it a standout compared to Azure CosmosDB’s Gremlin only graph query support. If you haven’t worked with Cypher before, it is one of the most enjoyable and intuitive query languages I’ve ever used. (Check out Neo4j Aura if you’d like to run graph databases on GCP — I have a soft spot for graph databases!)

Azure

If you’re already comfortable with C# or are willing to make the small transition from TypeScript to C#, Azure offers perhaps one of the best environments for startups because of the extremely low “connective complexity” when using Azure Functions and Static Web Apps.

While Azure supports many languages and runtimes, some of the best features only have full support in C#. You can get many of the same benefits whether you’re using Java, JavaScript, or Python; it simply won’t be quite as streamlined as it is when using C# and Visual Studio.

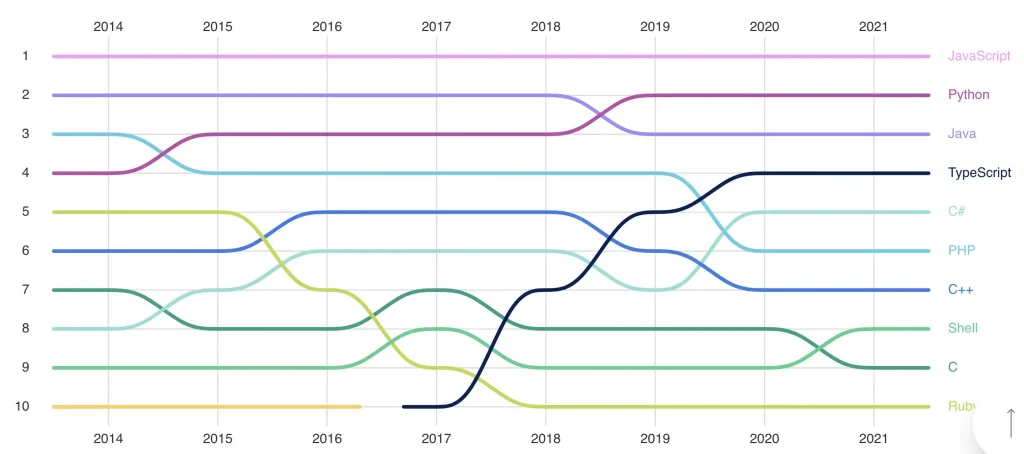

Is it worth adopting C#? According to devjobsscanner.com, C# is the 4th most demanded programming language. GitHub’s State of the Octoverse 2021 show’s C#having a resurgence over the last few years:

For the curious, I have a small repo which shows how similar JavaScript, TypeScript, and C# have become as they’ve converged over the years and I think it’s a small lift for teams that are already comfortable with TypeScript: https://github.com/CharlieDigital/js-ts-csharp

Functions

Azure Functions is one of the true stars of any of the cloud platforms because of how easy it makes connecting parts of cloud infrastructure together with minimal glue or IaC work.

The biggest delta between Azure Functions and AWS Lambda or Google Cloud Functions is that it operates at a much higher level of abstraction. The interface to Lambda and Google Cloud Functions is events, while Azure Functions uses bindings to abstract the event input and output.

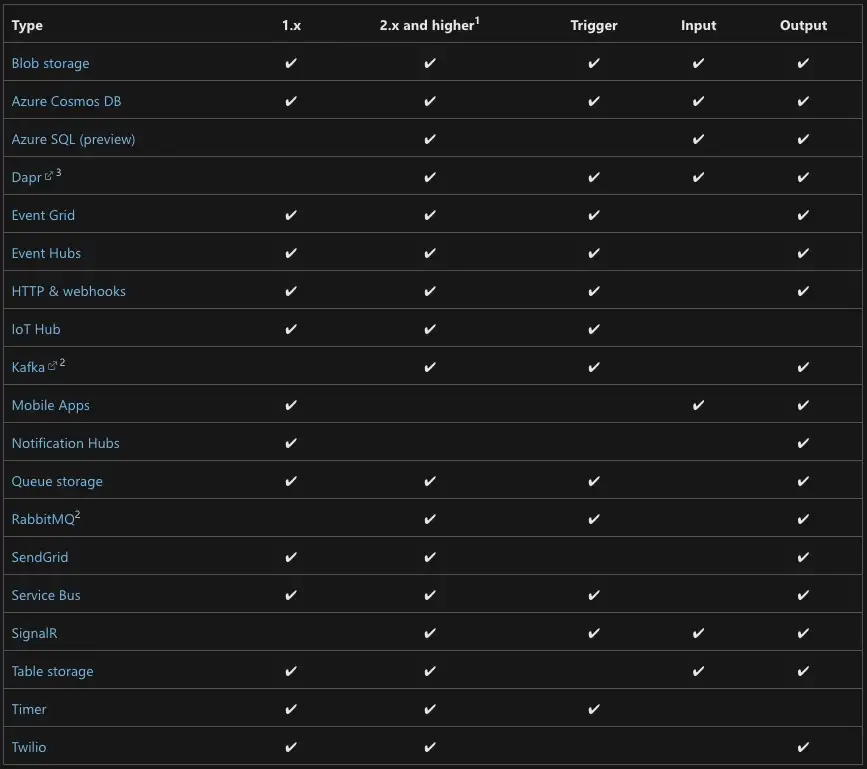

The built-in bindings are what make Functions so powerful:

What this means is that connecting I/O in Functions is unusually low in ceremony and complexity as the bindings take the place of managing client connections and external infrastructure. Simply stack together how you want to connect your pieces using attributes and you’re done!

A simple example is the Timer binding. On GCP, the equivalent is to use Cloud Scheduler or Cloud Tasks with a scheduled task. On AWS, this would require EventBridge with Lambda. With the Timer binding, simply decorating a serverless function with the Timer trigger attribute schedules it.

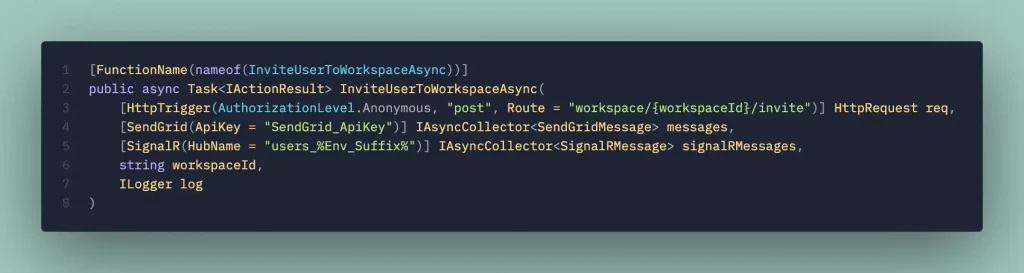

As another example, this method below connects an HTTP input trigger to both a SendGrid output message collection and a real-time SignalR websocket channel:

To forward a message to SendGrid, simply add it to the messages collection that is provided with the binding above:

Note what I didn’t need to do: instantiate clients or understand how to use the SendGrid API; I just construct a message and hand it off to the binding.
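To make the binding idea concrete for readers who have not used it, here is a toy model in plain Python. It is not Azure’s API: the `output_binding` decorator, the `on_signup` handler, and the `sent` list standing in for an email provider are all hypothetical names invented for this sketch. It only illustrates the pattern, in which the function receives a collection, appends messages to it, and never touches a client.

```python
from functools import wraps
from typing import Callable, List


def output_binding(send: Callable[[dict], None]):
    """Toy decorator (hypothetical, not an Azure API).

    Hands the wrapped function an empty `messages` list and, once the
    function returns, forwards every collected message to `send`.
    """
    def decorate(handler):
        @wraps(handler)
        def wrapper(*args, **kwargs):
            messages: List[dict] = []
            result = handler(*args, messages=messages, **kwargs)
            for message in messages:
                send(message)  # delivery happens outside the handler
            return result
        return wrapper
    return decorate


# Stand-in for a real email provider: delivered messages end up here.
sent: List[dict] = []


@output_binding(send=sent.append)
def on_signup(email: str, messages: List[dict]) -> str:
    # The handler only describes the message; it never creates a client.
    messages.append({"to": email, "subject": "Welcome!"})
    return "ok"
```

Calling `on_signup("a@example.com")` returns `"ok"` and leaves one message in `sent`. In the real service the runtime plays the role of the decorator, which is why the function body stays so small.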

It is absolutely incredible how productive a team can be in Azure Functions because of this. There’s no second thought on building complex data flows and intricate interactions because it’s as simple as snapping endpoints together. This approach also minimizes the complexity of the IaC needed to deploy in Azure as much of that is handled through Function bindings.

Azure Durable Functions (long-running orchestrations) also have built-in webhook event receivers. This allows building long-running, complex serverless orchestrations that can interact with external systems with minimal need to set up additional infrastructure (for example, until Lambda gained Function URLs this spring, it was necessary to stand up API Gateway or an Application Load Balancer to expose those endpoints).

It isn’t without fault as the cold starts can be brutal relative to Lambda, but if you are clever with how you manage keeping warm instances around or opt for one of the tiers with warm instances, it offers the best of both worlds.

Static Web Apps

Modern SPAs have a fairly common deployment pattern, and Azure Static Web Apps streamlines this by offering simple connectivity between your static front-end and API backend.

While it is also possible to deploy your static React, Vue, or Svelte assets and serve them from S3 or Google Cloud Storage buckets, Azure Static Web Apps cuts some of the extra steps required to map S3 or GCS buckets to public facing apps by including SSL, custom domains, authz/authn, GitHub integration, and more in one package. Once again, Azure provides a higher level abstraction over the infrastructure pieces required to build and deploy a common web application deployment pattern.

Want to deploy a Next.js app from GitHub to Azure Static Web Apps — for free? Microsoft’s got you.

It is by far one of the easiest ways to deploy and operate a modern SPA.

Tooling Integration

Microsoft is the only one of the three that ships its own IDE and not just one, but two excellent IDEs: Visual Studio and Visual Studio Code.

If you’re comfortable with C# and .NET, Visual Studio’s deep integration with Azure provides an amazingly streamlined development experience from initializing projects to delivering them to the cloud to monitoring and debugging.

Even VS Code, with the bevy of first-party extensions from Microsoft, makes working with Azure from the IDE extremely fluid.

Microsoft’s ownership of Azure DevOps and GitHub also means that many of the CI/CD integrations — especially from DevOps — are very streamlined and low in complexity. AWS CodeBuild and CodeDeploy, for example, pale in comparison and simply aren’t worth using.

Best Free Tier

Of the three clouds, I have to commend Microsoft for offering the best free tier by a long mile.

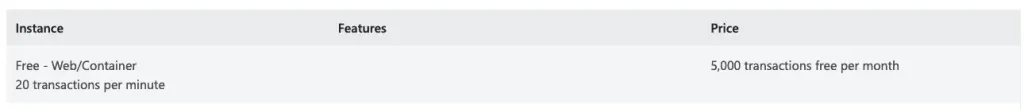

Azure Cognitive Q&A Maker, for example, offers a perpetually free tier that is limited only by scale:

Want to experiment with computer vision? Free:

Never worry about being billed because some service wasn’t decommissioned!

Azure CosmosDB similarly provides a free tier which only limits you by throughput and scale.

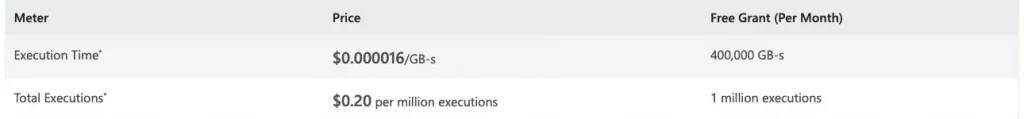

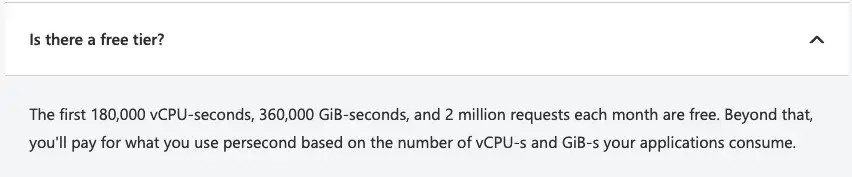

Azure Functions provides an extremely generous monthly free grant:

How about Azure Container Apps?

Overall, I find that Azure provides the most generous free tier that is very explicitly free and also makes it unlikely to end up with surprise bills when using the free services compared to AWS. This is a big boon for startups as teams can easily deploy live versions using the free tiers during the early days.

GCP

The appeal of GCP for startups, aside from the really good documentation and financial benefits, is that with a small number of really easy to use services, teams can simplify architecture and reduce complexity, and the platform is perhaps a bit more accessible than Azure Functions.

The combination of these services simplifies how APIs are built and makes it easy for less experienced teams to build otherwise complex models of compute and deployment.

Containers and Kubernetes

While I would recommend that every startup avoid Kubernetes if at all possible, if the use case doesn’t fit well into serverless containers, GCP’s Google Kubernetes Engine (GKE) is the easiest to use based on my experience.

GKE’s Autopilot is the easiest way to take advantage of Kubernetes with the caveat that it doesn’t support mutating dynamic admission webhooks (e.g. cannot install sidecars like Dapr).

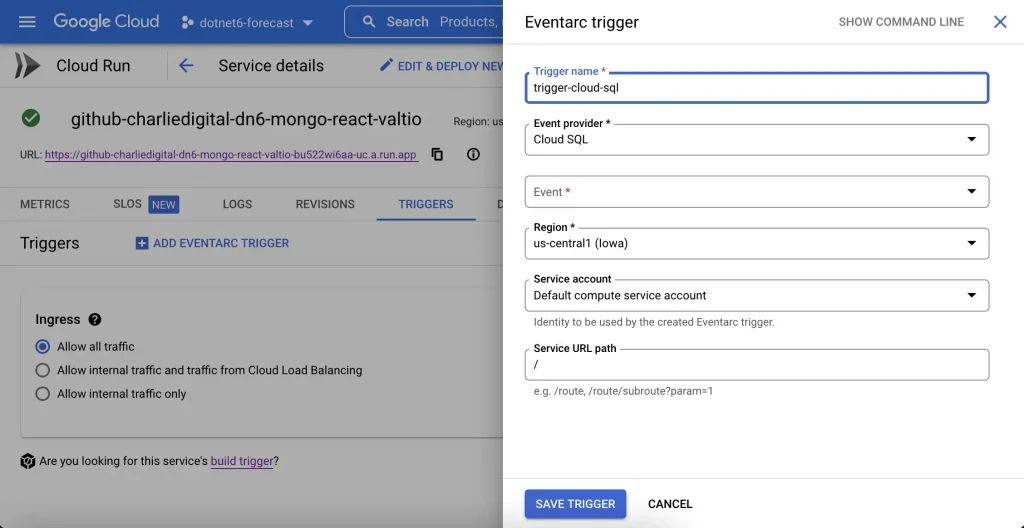

But GKE aside, Google Cloud Run (GCR) is one of the most accessible ways of running serverless workloads and the one that I recommend that every team explores before considering serverless functions or Kubernetes.

Compared to AWS App Runner, GCR offers true scale to zero. Azure Container Apps also scales to zero, but was released to general availability at the end of May while GCR is more mature and has been shipping features since late 2018.

Why GCR or serverless containers in general?

- Deploy serverless workloads using any language, runtime, or framework by just listening on a known port.

- Use any framework or middleware without having to hack it into a serverless function runtime model.

- Many of the runtime constraints of serverless functions are negated, including short timeouts that limit the types of serverless workloads suitable for serverless functions.

- Because GCR deploys and scales any container and simply forwards messages to a configurable, known port, it is easy to move the workload to any cloud and avoid lock-in; there’s really no special tooling involved.

- GCR only requires a Dockerfile and the application to listen on a known port. This means that the local development experience is…well, just local development. No special tooling required! This is by far one of the best reasons to use serverless containers because the development experience is so much better compared to working with serverless functions as there is very little in the way of runtime constraints.

- GCR even supports gRPC and websockets (with caveats).
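To show how little the “listen on a known port” contract asks of an application, here is a minimal sketch using only the Python standard library. Cloud Run passes the port to the container through the `PORT` environment variable (8080 by default); the handler, the response text, and the `make_server` and `main` helper names are illustrative assumptions rather than anything from the original article.

```python
import os
from http.server import BaseHTTPRequestHandler, HTTPServer


class Handler(BaseHTTPRequestHandler):
    def do_GET(self):
        # Answer every GET with a small plain-text body.
        body = b"hello from a container\n"
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def make_server() -> HTTPServer:
    # Read the port from the environment; fall back to 8080 locally.
    port = int(os.environ.get("PORT", "8080"))
    # Bind to all interfaces so traffic from outside the container arrives.
    return HTTPServer(("0.0.0.0", port), Handler)


def main() -> None:
    # Blocks forever serving requests; call this as the container entry point.
    make_server().serve_forever()
```

Running `main()` locally and opening `http://localhost:8080/` exercises the same code path the container would use once deployed, which is the point of the bullet above: there is no function runtime to emulate.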

Google Cloud Run is so easy to use and makes deployment of serverless, scale-to-zero workloads so friction-free that it simply makes every other model of deploying compute feel unnecessarily complicated in contrast.

Pub/Sub, Cloud Tasks, and Cloud Scheduler

In AWS, container workloads can be separated into three classes:

- Web/backend services — these respond to HTTP requests

- Worker services — these are long-lived processes that poll for input

- Jobs — these are scheduled

Depending on when and how the work is executed, a team needs to decide which model to use and thus which underlying container compute service to deploy. While it seems to make logical sense to partition workloads and thus compute/deployment models for the containers, I see this as just unnecessary complexity since these are three abstractions of how (push vs pull) and when the compute action is executed.

While GCR has jobs in beta, its core competency is the first type of workload where a service responds to an HTTP, gRPC, or web socket message. So how can we map long-lived service type workloads into GCR? By using Pub/Sub, Cloud Tasks, and Cloud Scheduler.

Unlike the similar messaging services in AWS (SNS+SQS) and Azure (Service Bus), Google Pub/Sub implements a low ceremony, built-in HTTP push subscription. To achieve the same in AWS or Azure would require adding more infrastructure and/or more code just to move messages around to HTTP endpoints (much more in the case of AWS).
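As a rough illustration of what receiving a push looks like, the sketch below decodes the JSON envelope that a Pub/Sub push subscription POSTs to an endpoint. The envelope carries a `message` object whose `data` field is base64-encoded, alongside `messageId` and optional `attributes`. The `decode_push` function name and the shape of its return value are assumptions made for this example.

```python
import base64
import json
from typing import Any, Dict


def decode_push(body: bytes) -> Dict[str, Any]:
    """Unpack the request body of a Pub/Sub push delivery.

    Returns a small dict (an illustrative shape, not a Google type) with
    the message id, its attributes, and the decoded payload text.
    """
    envelope = json.loads(body)
    message = envelope["message"]
    # `data` is base64-encoded; it may be absent on attribute-only messages.
    data = base64.b64decode(message.get("data", "")).decode("utf-8")
    return {
        "id": message.get("messageId"),
        "attributes": message.get("attributes", {}),
        "data": data,
    }
```

An HTTP handler would call `decode_push` on the request body, do its work, and reply with a success status such as 200 or 204 to acknowledge the message; a non-success response causes Pub/Sub to retry the delivery.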

It might not seem like much on the surface, but Pub/Sub and Cloud Tasks are possibly the most powerful tools to help teams minimize sprawl and simplify application architecture because they allow building nearly all application logic as simple HTTP endpoints in Cloud Run, with the combination of Pub/Sub, Cloud Tasks, and Cloud Scheduler managing how and when the code is executed.

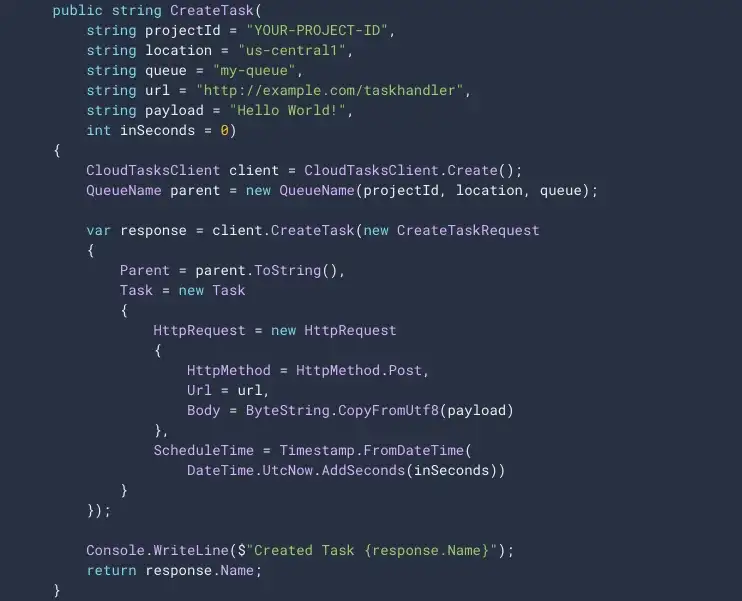

For cases where Pub/Sub’s ordering keys aren’t necessary, Cloud Tasks is even simpler, offering an incredibly elegant interface to invoke HTTP endpoints via a queue. Because Cloud Tasks even allows scheduling of the HTTP push (a temporal HTTP push), it is possible to build simple long running workflows just by scheduling a future HTTP request.
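The “temporal HTTP push” can be sketched as a plain data structure. In the Cloud Tasks REST API, a task carries an `httpRequest` (URL, method, headers, base64-encoded body) and an optional `scheduleTime` timestamp; the function below builds such a task for a request that should fire after a delay. The `build_delayed_task` name, the example URL, and the payload are hypothetical, and actually submitting the task to a queue (with authentication) is left out.

```python
import base64
import json
from datetime import datetime, timedelta, timezone
from typing import Optional


def build_delayed_task(
    url: str,
    payload: dict,
    delay: timedelta,
    now: Optional[datetime] = None,
) -> dict:
    """Build a task dict describing an HTTP POST to run `delay` from now."""
    now = now or datetime.now(timezone.utc)
    run_at = (now + delay).astimezone(timezone.utc)
    body = json.dumps(payload).encode("utf-8")
    return {
        "httpRequest": {
            "url": url,
            "httpMethod": "POST",
            "headers": {"Content-Type": "application/json"},
            # The REST representation expects the body as base64 text.
            "body": base64.b64encode(body).decode("ascii"),
        },
        # RFC 3339 UTC timestamp telling the queue when to dispatch.
        "scheduleTime": run_at.strftime("%Y-%m-%dT%H:%M:%SZ"),
    }


# Example: remind a (hypothetical) endpoint about a trial ending in 3 days.
task = build_delayed_task(
    "https://example.com/trial-reminder",
    {"userId": "u-123"},
    timedelta(days=3),
)
```

A simple long-running workflow then becomes a chain of endpoints, each of which schedules the next step as a future task before it returns.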

It even supports built-in rate limiting on the push side.

Recurring, scheduled jobs can make use of Cloud Scheduler to kick them off using, you guessed it, simple HTTP endpoints.

It may seem odd to fawn over such a simple feature, but this elegant paradigm allows a team to build entire systems on Google Cloud Run as HTTP endpoints using familiar languages (JS/TS, Python), frameworks (Express, Nest.js, Flask), and runtimes (Node.js, Python) and then rely on a really easy to use HTTP push model to manage how and when code is executed. This simplifies architecture decisions and effectively turns an entire application into webhook receivers.

With everything implemented as an HTTP endpoint in GCR containers, this simplifies deployment, operations, monitoring, and scaling, and it also makes it exceptionally easy to test code using Postman or curl. It simplifies authentication since there’s only one model of service-to-service authentication needed when working with Pub/Sub.

I know what you’re thinking: “All of this is possible on AWS and Azure with this, this, and this!” Of course this is all feasible to do in AWS and Azure with more services and more code, but it is exceedingly simple in GCP because of how elegantly these services complement each other and how little glue is needed to make it work.

For SaaS startups with APIs at the core, I have found that both GCP and Azure offer compelling higher level abstractions that allow teams to move fast with low complexity. It is possible to build similar patterns on AWS, but the complexity of doing so feels significantly higher because the fundamental building blocks in AWS are lower level, leading to multiple first and third party tools that have been developed to address this complexity.

For an early stage startup, is it worth fighting that friction?

The combination of great documentation and generous credits pushes GCP ahead as a highly accessible cloud platform — without sacrificing room for growth — for young startups that do not necessarily have deep technical experience and are racing to product market fit.

Azure Functions and Microsoft’s dominance in first-party tooling put it close behind GCP for startups willing to make the leap to the Microsoft ecosystem.

AWS offers a lot of powerful and unique capabilities, but the lower levels of abstraction and higher connective complexity mean that it is better suited for more experienced teams or teams that already have deep AWS experience.

Verdict

Use AWS If…

- You’ll need to scale your team with experienced engineers, fast

- You’ll benefit from a best-in-class third-party ecosystem and community

- Your team already has experience with AWS and knows how to wrangle the low-level, connective complexity and tendency towards sprawl

- You need access to unique services that have no parallels on Azure and GCP

Use Azure If…

- You’re comfortable with C# or TypeScript

- Your team can tap into the efficiency gained from deep first-party IDE integration

- You want the easiest way to build and deploy a static website/SPA+API

- Your use case requires complex data flows and you want the easiest way to manage that complexity

Use GCP If…

- You want the best experience working with containers — either serverless or orchestrated via Kubernetes

- The core of your product is an HTTP API

- Your team doesn’t already have deep cloud experience

- The ability to iterate quickly is key

The original article was published on Medium.
