By Harshal Rane, Oct 26, 2022

Google Cloud Platform recently released cross-project service referencing for Cloud Load Balancing in GA; in this post we call the resulting setup a Centralised Load Balancer. With it, a single load balancer can use backends from different projects in the same organization. We will focus on this new feature, but first let's review what a load balancer does.

What is a Load Balancer?

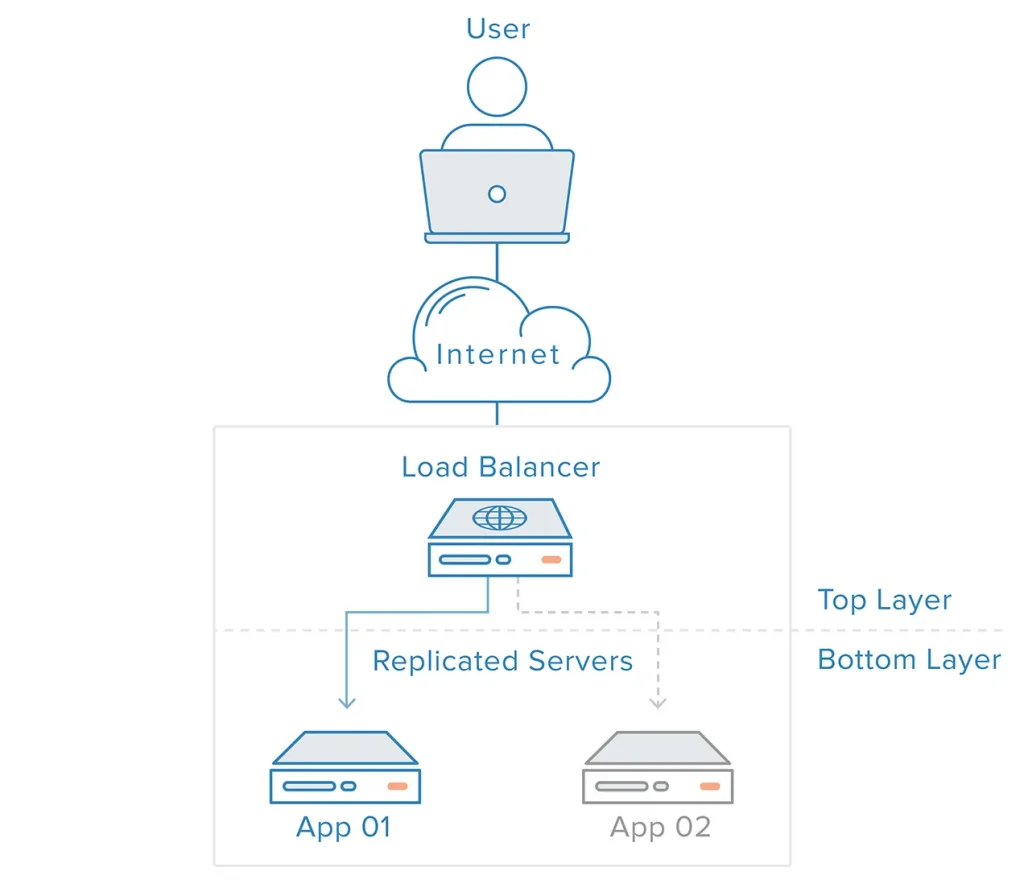

In cloud-based architectures, load balancers play a central role in managing traffic to servers deployed in the cloud. A load balancer acts as the entry point for user requests: instead of reaching a server directly, traffic arrives at the load balancer, which distributes it across the healthy backend servers.

Some key advantages of a load balancer:

- Reduced downtime

- High availability

- Scalability

- Redundancy

- Flexibility

- Efficiency

Features of GCP Cloud Load Balancing

Cloud Load Balancing is a fully distributed, software-defined, managed service. Because it is a PaaS offering, users don't need to manage any infrastructure for the load balancer.

Cloud Load Balancing is built on the same frontend-serving infrastructure that powers Google. It can handle more than 1 million queries per second with consistently high performance and low latency. Traffic enters Cloud Load Balancing through more than 80 distinct global load-balancing locations, maximizing the distance it travels on Google's fast private network backbone. Because GCP offers this service in more than 35 regions, you can serve content from locations close to your users.

- Single anycast IP address.

A single IP address serves as the load balancer's frontend, with support for multiple ports and SSL certificates. Multi-region backend failover is available automatically to ensure high availability.

- Software-defined load balancing.

Cloud Load Balancing is a fully distributed, software-defined, managed service for all your traffic. It is not an instance-based or device-based solution; it is deployed globally to avoid a single point of failure.

- Seamless autoscaling.

Cloud Load Balancing scales as the load from user requests grows, and it can handle sudden traffic spikes, scaling in a matter of seconds.

- Layer 4 and Layer 7 load balancing.

Supports Layer 7 (HTTP and HTTPS) global load balancers as well as Layer 4 (TCP, UDP, ESP, GRE, ICMP, and ICMPv6) regional load balancer deployments.

- External and internal load balancing.

There are two types of load balancers in GCP: internal, for traffic that stays inside Google Cloud, and external, for traffic coming from outside Google Cloud.

- Global and regional load balancing.

Distribute your load-balanced resources in a single region or across multiple regions, deploying load balancers close to your users for low latency and high availability.

- Advanced feature support.

Other features include CDN integration, cookie management, and Cloud Armor support to protect services from DDoS attacks.

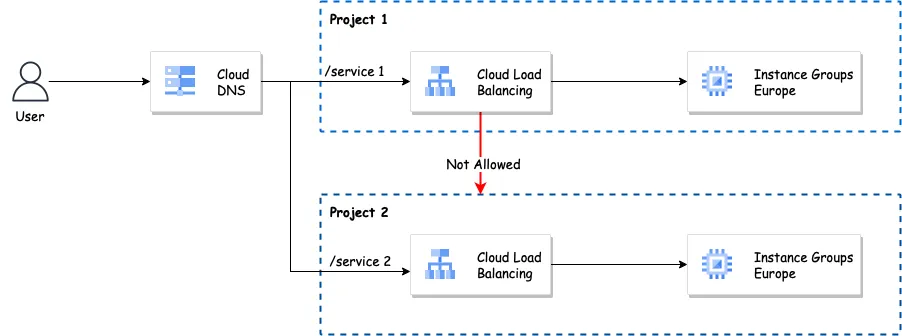

Old Architecture of Cloud Load Balancing

Previously, a load balancer deployed in GCP could not use services or backends deployed in other projects. To work around this, you had to create a separate load balancer in each project, each with its own IP address and domain, which is really difficult to manage for a large team.

For enterprise-grade deployments this is a real pain: just connecting two projects requires setting up separate network peering, and every load balancer needs its own reserved IP address and SSL certificate. This is where the newly released Centralised Load Balancer helps.

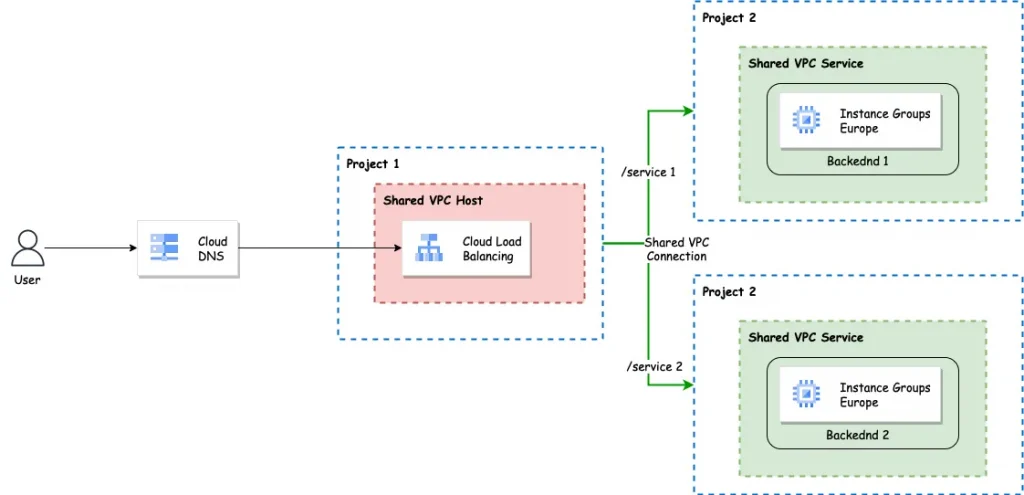

New Architecture of Cloud Load Balancing

By combining a Shared VPC architecture with the new regional load balancer and cross-project backend services, we avoid creating a separate load balancer for each project. With a Shared VPC network, you don't need to link multiple VPCs or manage firewall rules across many VPCs.

There are some prerequisites:

- Need organization-based setup of GCP environment.

Link with detailed steps. - Two different GCP projects

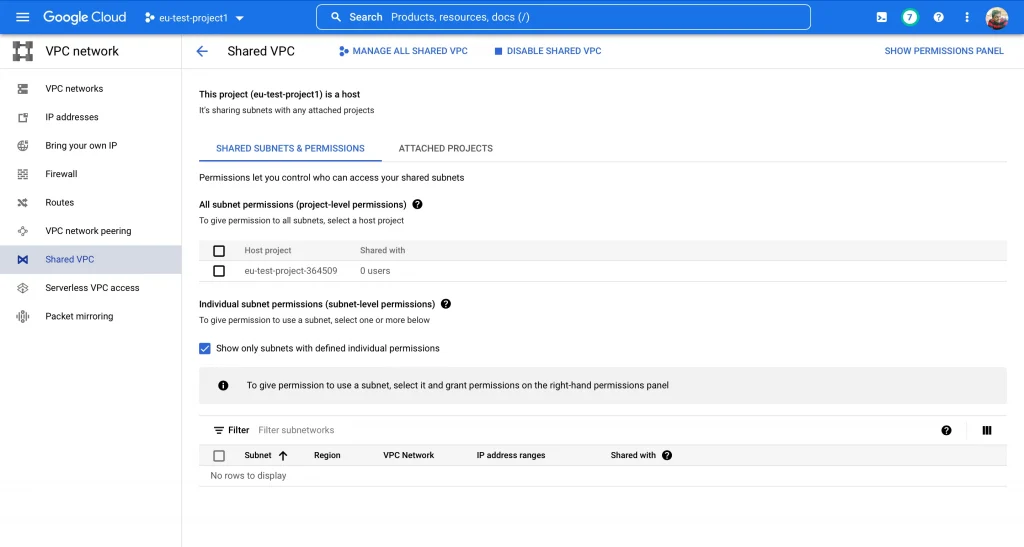

Link with detailed steps. - Shared VPC needs to be deployed with IAM permissions to the service project to access Load Balancer.

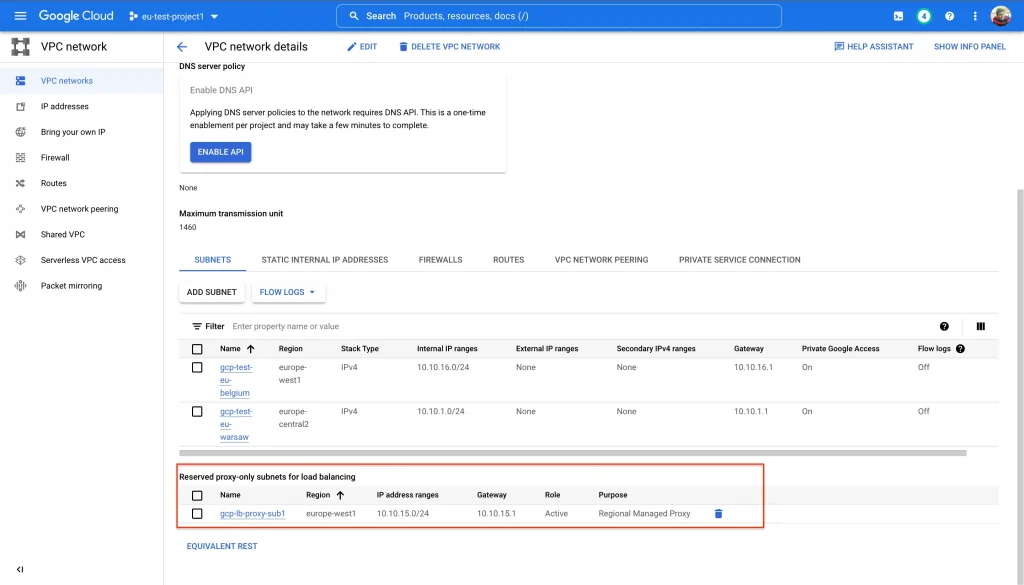

Link with detailed steps. - Proxy subnet:

The proxy servers implementing your regional Envoy-based load balancer need IP addresses, which will be allocated automatically from a subnet that you reserve for this purpose (and this purpose only) in region “europe-west1”. Each proxy will use its assigned IP address when connecting to the servers implementing your backend services.

Link with detailed steps.
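For readers who prefer the CLI to the console, here is a minimal sketch of reserving the proxy-only subnet with gcloud. It assumes the Shared VPC network is “gcp-test-vpc” (the network named in this post's firewall command); the subnet name “proxy-only-subnet” and the range 10.129.0.0/23 are hypothetical, so choose a range that does not overlap your other subnets. REGIONAL_MANAGED_PROXY is the subnet purpose Google documents for regional Envoy-based load balancers. The run helper is also hypothetical: it only prints each command unless you set DRY_RUN=0.

```shell
# Hypothetical dry-run helper (not part of gcloud): prints each command and
# records it in LOG; set DRY_RUN=0 to execute the commands for real.
set -eu
LOG=""
run() {
  LOG="${LOG}$*
"
  if [ "${DRY_RUN:-1}" = "1" ]; then echo "+ $*"; else "$@"; fi
}

HOST_PROJECT="eu-test-project1"
REGION="europe-west1"
NETWORK="gcp-test-vpc"          # Shared VPC network (name taken from the firewall example)
PROXY_RANGE="10.129.0.0/23"     # hypothetical range reserved only for the Envoy proxies

# Reserve a proxy-only subnet in the host project's Shared VPC.
run gcloud compute networks subnets create proxy-only-subnet \
  --project="$HOST_PROJECT" \
  --network="$NETWORK" \
  --region="$REGION" \
  --purpose=REGIONAL_MANAGED_PROXY \
  --role=ACTIVE \
  --range="$PROXY_RANGE"
```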

Let’s implement this solution. We have two projects:

1) eu-test-project1

In this project we have deployed the Shared VPC and its subnets, so this project is the host project.

It also contains the VPC's proxy-only subnet for the load balancer.

In addition, this project acts as the central project for managing load balancer configuration.

2) eu-test-project2: the service project that hosts the backend instances.

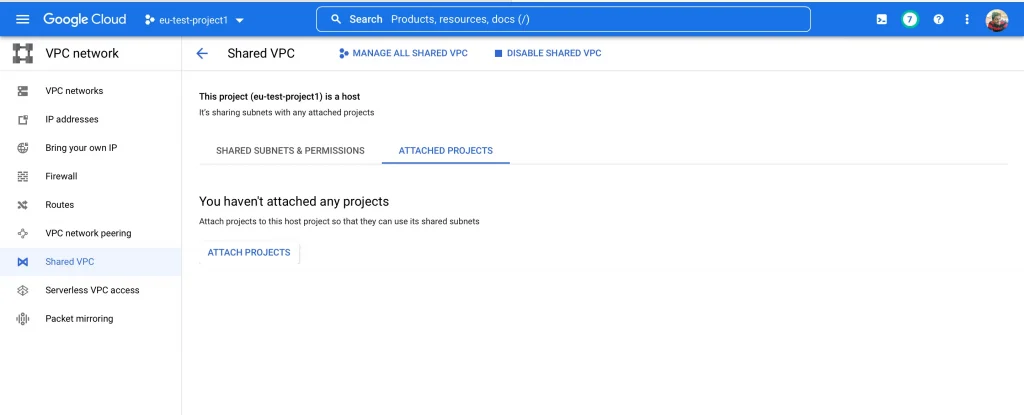

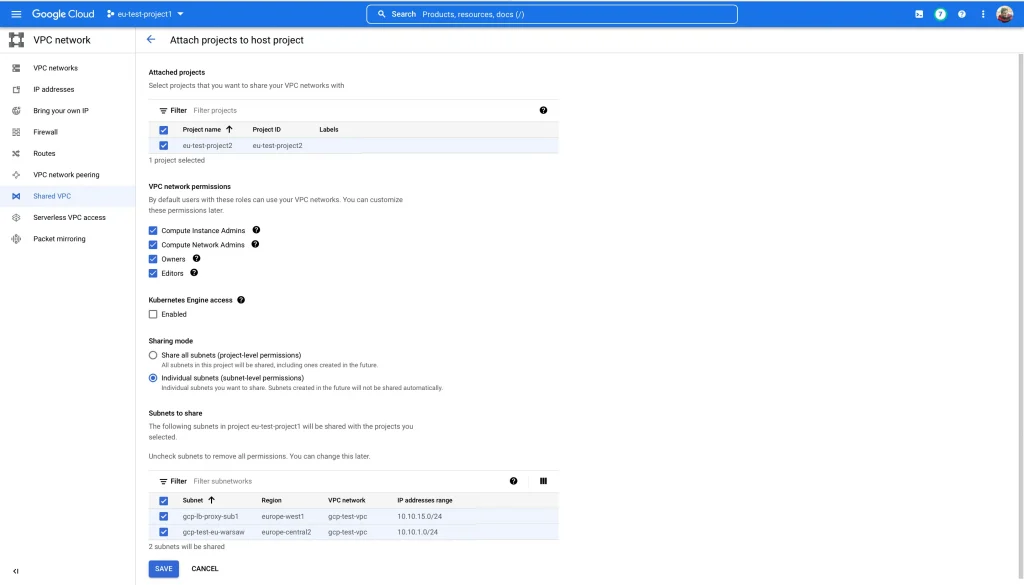

For “eu-test-project2” to be accessible to the load balancer deployed in “eu-test-project1”, we need to attach it as a service project to the Shared VPC deployed in “eu-test-project1”.

When attaching the project to the Shared VPC, make sure you select the appropriate permissions for the service project. Some of the available options are:

- Compute Instance Admins:

Principals with the Compute Instance Admin role can create Compute Engine resources (like VM instances) on the shared subnets.

- Compute Network Admins:

Principals with the Network Admin role can create internal load balancers on the shared subnets.

- Owners:

Principals with the Owner role can create Compute Engine and load balancing resources on the shared subnets.

- Editors:

Principals with the Editor role can create Compute Engine and load balancing resources on the shared subnets.
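The same attachment can be sketched with gcloud. This assumes you hold the Shared VPC Admin role in the organization; the subnet name “gcp-test-subnet” and the principal “user:dev@example.com” are hypothetical placeholders. The last command grants the Compute Network User role on the shared subnet, roughly what the console's permission options above grant, but scoped here to a single principal. The run helper is hypothetical and prints each command unless DRY_RUN=0.

```shell
# Hypothetical dry-run helper (not part of gcloud): prints each command and
# records it in LOG; set DRY_RUN=0 to execute the commands for real.
set -eu
LOG=""
run() {
  LOG="${LOG}$*
"
  if [ "${DRY_RUN:-1}" = "1" ]; then echo "+ $*"; else "$@"; fi
}

HOST_PROJECT="eu-test-project1"
SERVICE_PROJECT="eu-test-project2"
REGION="europe-west1"
SUBNET="gcp-test-subnet"                 # hypothetical name of the shared subnet
SERVICE_MEMBER="user:dev@example.com"    # hypothetical principal in the service project

# Mark the host project as a Shared VPC host (requires the Shared VPC Admin role).
run gcloud compute shared-vpc enable "$HOST_PROJECT"

# Attach eu-test-project2 as a service project of that host.
run gcloud compute shared-vpc associated-projects add "$SERVICE_PROJECT" \
  --host-project="$HOST_PROJECT"

# Let a principal from the service project use the shared subnet.
run gcloud compute networks subnets add-iam-policy-binding "$SUBNET" \
  --project="$HOST_PROJECT" \
  --region="$REGION" \
  --member="$SERVICE_MEMBER" \
  --role=roles/compute.networkUser
```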

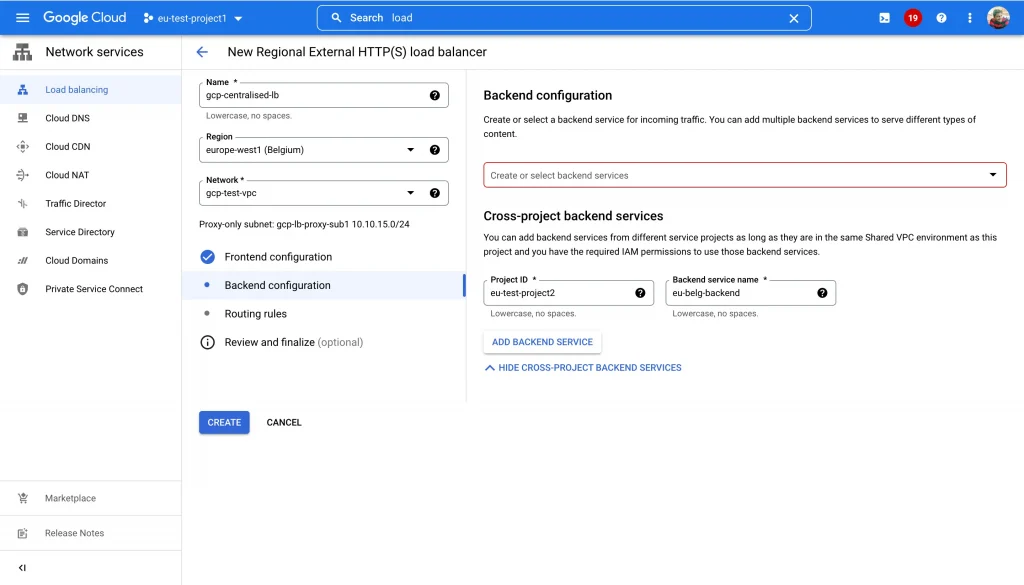

Once the project is attached to the Shared VPC, we can add backends from “eu-test-project2” to the load balancers created in “eu-test-project1”.
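A minimal sketch of the service-project side: a regional health check, a regional backend service, and an existing instance group attached to it. The names “backend-hc”, “cross-project-backend”, and “backend-ig”, and the zone europe-west1-b, are hypothetical. EXTERNAL_MANAGED is the scheme for a regional external HTTP(S) load balancer; an internal one would use INTERNAL_MANAGED instead. The hypothetical run helper prints each command unless DRY_RUN=0.

```shell
# Hypothetical dry-run helper (not part of gcloud): prints each command and
# records it in LOG; set DRY_RUN=0 to execute the commands for real.
set -eu
LOG=""
run() {
  LOG="${LOG}$*
"
  if [ "${DRY_RUN:-1}" = "1" ]; then echo "+ $*"; else "$@"; fi
}

SERVICE_PROJECT="eu-test-project2"
REGION="europe-west1"
ZONE="europe-west1-b"              # hypothetical zone of the instance group
INSTANCE_GROUP="backend-ig"        # hypothetical, already existing instance group
BACKEND="cross-project-backend"    # hypothetical backend service name

# Regional HTTP health check for the backends.
run gcloud compute health-checks create http backend-hc \
  --project="$SERVICE_PROJECT" \
  --region="$REGION" \
  --port=80

# Regional backend service for a regional external HTTP(S) load balancer.
run gcloud compute backend-services create "$BACKEND" \
  --project="$SERVICE_PROJECT" \
  --region="$REGION" \
  --load-balancing-scheme=EXTERNAL_MANAGED \
  --protocol=HTTP \
  --health-checks=backend-hc \
  --health-checks-region="$REGION"

# Put the instance group behind the backend service.
run gcloud compute backend-services add-backend "$BACKEND" \
  --project="$SERVICE_PROJECT" \
  --region="$REGION" \
  --instance-group="$INSTANCE_GROUP" \
  --instance-group-zone="$ZONE" \
  --balancing-mode=UTILIZATION
```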

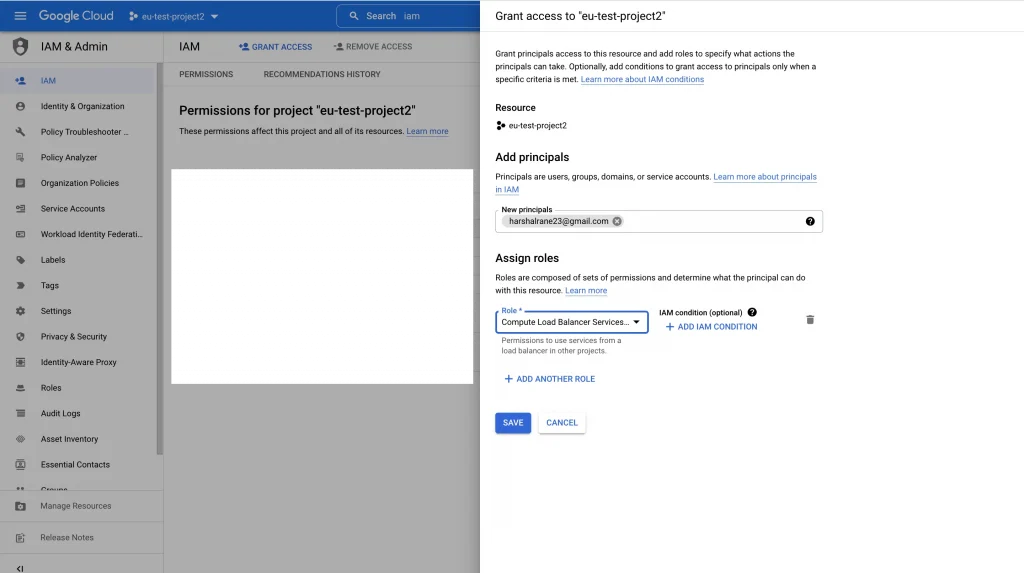

- The most important IAM step in this setup

After creating the backend service in the service project, make sure you grant load balancer administrators the IAM permissions they need to use your backend service from the host project.

For example, Harshal is the admin who handles load balancer configuration, so he needs the “Compute Load Balancer Services User” role (roles/compute.loadBalancerServiceUser). This role allows using services from a load balancer in other projects.
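Granting that role from the CLI might look like the sketch below, assuming the role is granted at the level of the service project “eu-test-project2”; the account “user:harshal@example.com” is a hypothetical stand-in for the admin. The role ID roles/compute.loadBalancerServiceUser corresponds to “Compute Load Balancer Services User”. The hypothetical run helper prints the command unless DRY_RUN=0.

```shell
# Hypothetical dry-run helper (not part of gcloud): prints each command and
# records it in LOG; set DRY_RUN=0 to execute the commands for real.
set -eu
LOG=""
run() {
  LOG="${LOG}$*
"
  if [ "${DRY_RUN:-1}" = "1" ]; then echo "+ $*"; else "$@"; fi
}

SERVICE_PROJECT="eu-test-project2"
LB_ADMIN="user:harshal@example.com"   # hypothetical account of the load balancer admin

# Allow the admin to reference this project's backend services from
# load balancers in other projects, such as eu-test-project1.
run gcloud projects add-iam-policy-binding "$SERVICE_PROJECT" \
  --member="$LB_ADMIN" \
  --role=roles/compute.loadBalancerServiceUser
```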

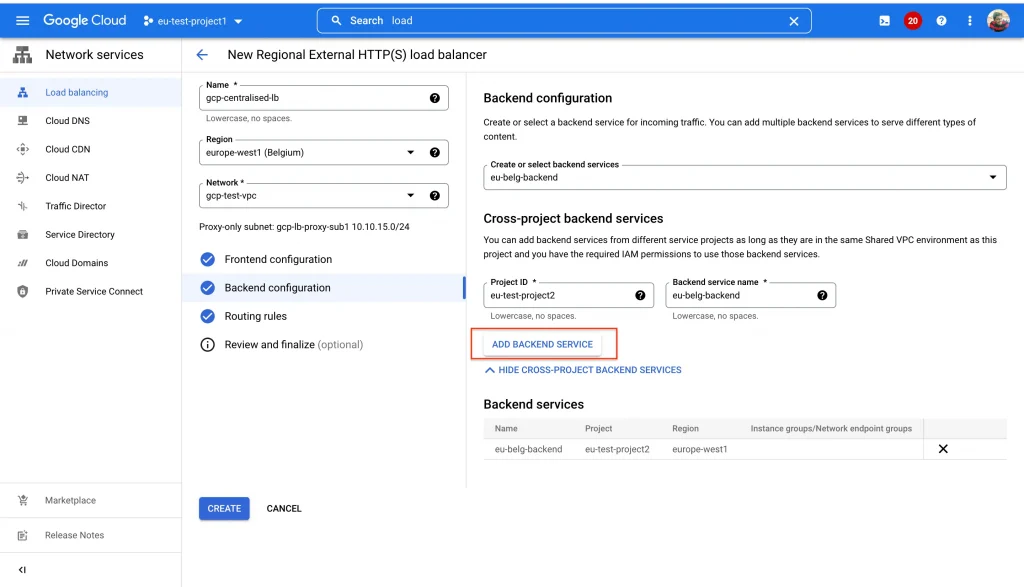

Setting up the Centralised Load Balancer

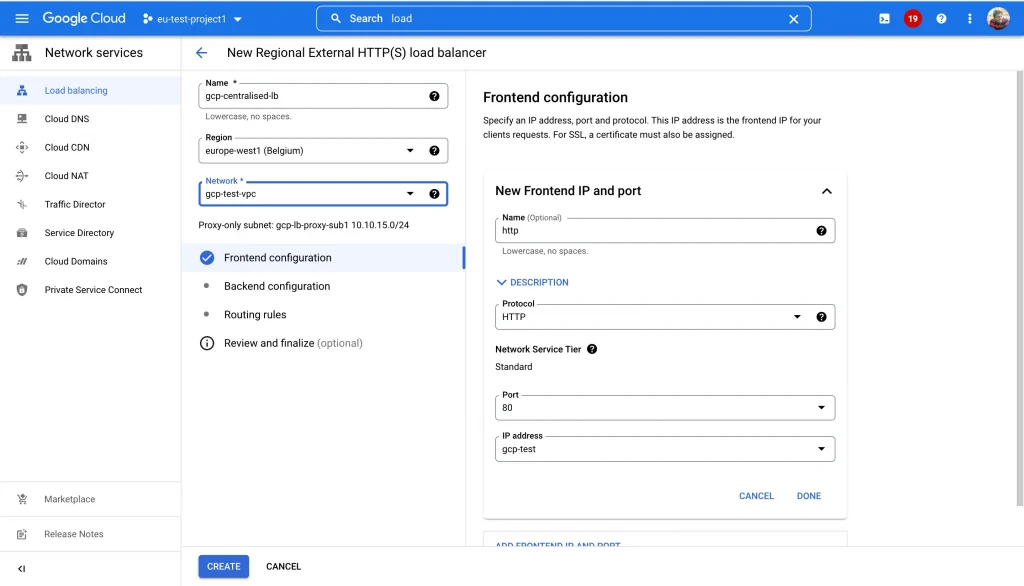

- Navigate to “eu-test-project1”, where we previously set up the Shared VPC, subnet, proxy-only subnet, and firewall rules.

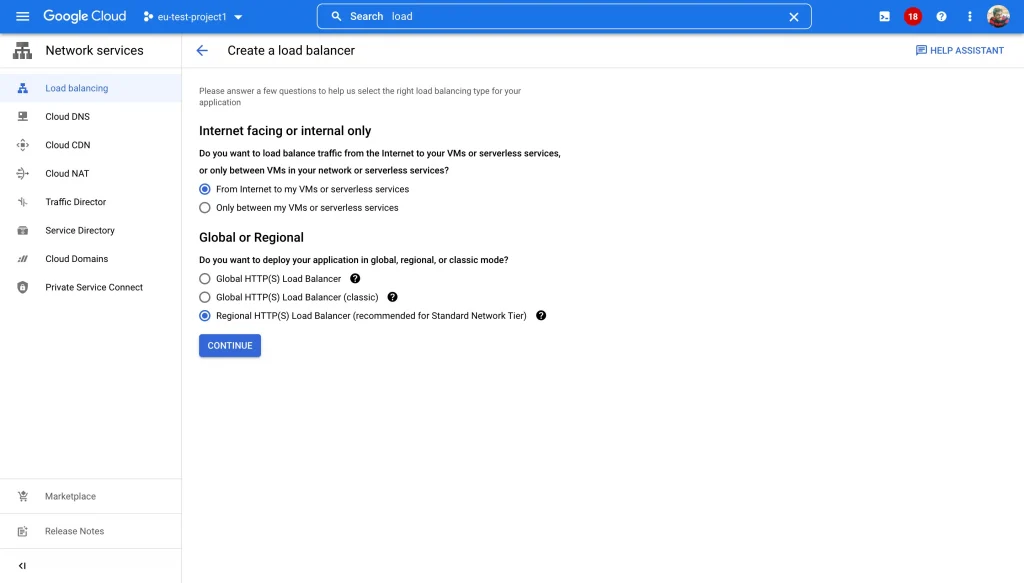

- Create a regional HTTPS load balancer, which supports cross-project backend services. At the time of writing, this feature is only available for regional internal and external load balancers.

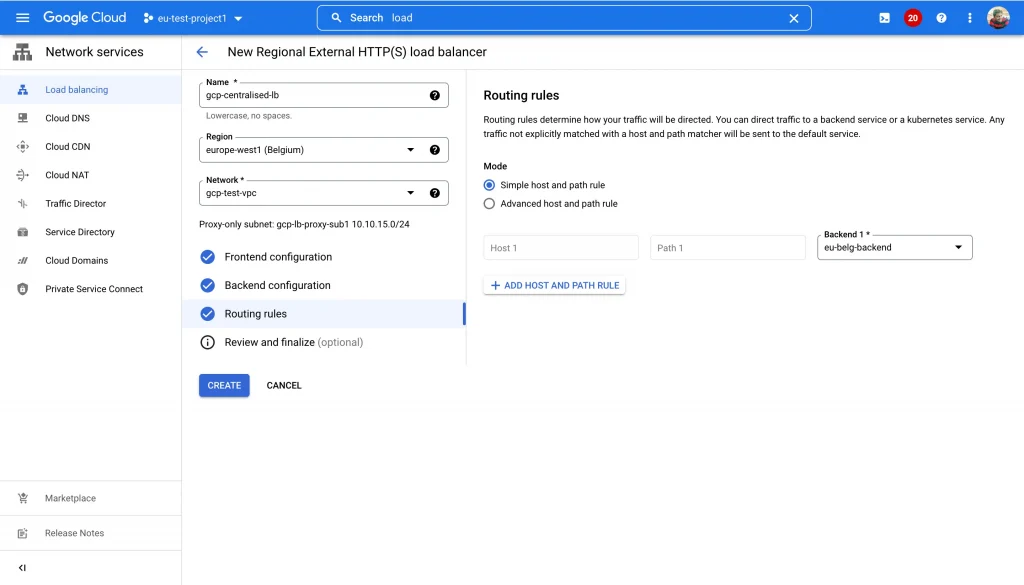

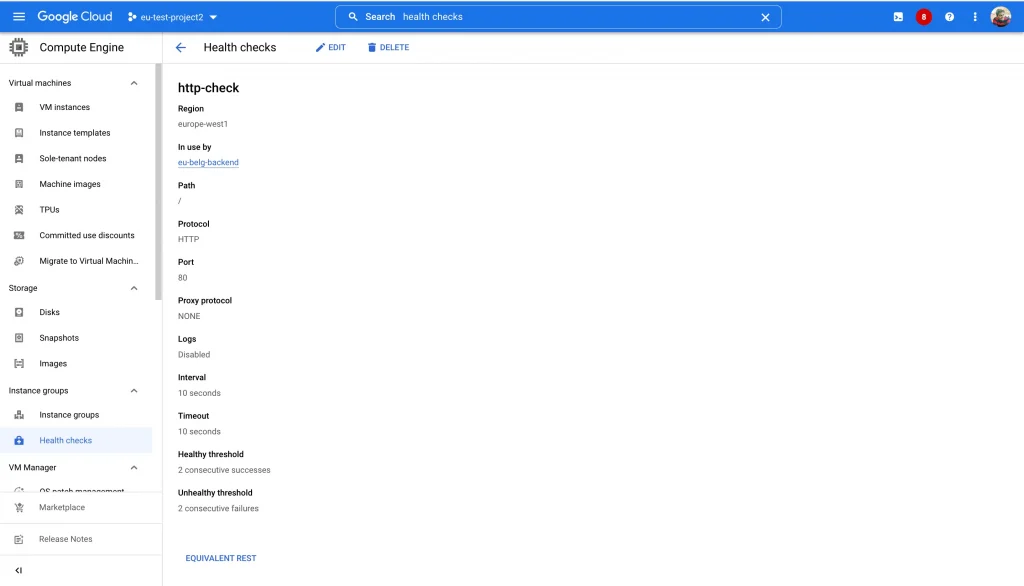

- Now let’s set up the frontend, backend, health checks, and routing rules for the load balancer.
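A gcloud sketch of the host-project side follows. The key detail is that the URL map in “eu-test-project1” names the backend service by its full resource path in “eu-test-project2”. The names “central-url-map”, “central-http-proxy”, “central-fr”, and “cross-project-backend” are hypothetical, and the frontend is plain HTTP on port 80 to keep the sketch short; an HTTPS frontend would use gcloud compute target-https-proxies create with a regional SSL certificate instead. The STANDARD network tier is an assumption, so check which tiers your region supports for regional external load balancers. The hypothetical run helper prints each command unless DRY_RUN=0.

```shell
# Hypothetical dry-run helper (not part of gcloud): prints each command and
# records it in LOG; set DRY_RUN=0 to execute the commands for real.
set -eu
LOG=""
run() {
  LOG="${LOG}$*
"
  if [ "${DRY_RUN:-1}" = "1" ]; then echo "+ $*"; else "$@"; fi
}

HOST_PROJECT="eu-test-project1"
SERVICE_PROJECT="eu-test-project2"
REGION="europe-west1"
NETWORK="gcp-test-vpc"
# Full resource path of the backend service living in the service project
# ("cross-project-backend" is a hypothetical name).
BACKEND_URI="projects/${SERVICE_PROJECT}/regions/${REGION}/backendServices/cross-project-backend"

# Routing rules: a regional URL map in the host project that points at the cross-project backend.
run gcloud compute url-maps create central-url-map \
  --project="$HOST_PROJECT" \
  --region="$REGION" \
  --default-service="$BACKEND_URI"

# Regional target proxy that uses the URL map.
run gcloud compute target-http-proxies create central-http-proxy \
  --project="$HOST_PROJECT" \
  --region="$REGION" \
  --url-map=central-url-map \
  --url-map-region="$REGION"

# Frontend: a forwarding rule listening on port 80.
run gcloud compute forwarding-rules create central-fr \
  --project="$HOST_PROJECT" \
  --region="$REGION" \
  --load-balancing-scheme=EXTERNAL_MANAGED \
  --network="$NETWORK" \
  --network-tier=STANDARD \
  --target-http-proxy=central-http-proxy \
  --target-http-proxy-region="$REGION" \
  --ports=80
```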

- Use the following gcloud command to create a firewall rule that allows incoming TCP connections from Google Cloud health check systems to instances in your VPC network. Otherwise, the health checks fail and the backends are marked unhealthy.

```shell
gcloud compute --project=project-id firewall-rules create allow-firewall \
  --direction=INGRESS --priority=1000 --network=gcp-test-vpc \
  --action=ALLOW --rules=tcp:80,tcp:443 \
  --source-ranges=35.191.0.0/16,130.211.0.0/22
```
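Health-check traffic is not the only traffic to allow. As the proxy-only subnet prerequisite explains, the Envoy proxies connect to your backends from addresses in that subnet, so Google's setup guides for regional Envoy-based load balancers also add an ingress rule for the proxy-only range. A sketch, assuming the hypothetical range 10.129.0.0/23 (use whatever range you actually reserved) and a hypothetical rule name “allow-proxy-only-subnet”; the run helper, also hypothetical, prints the command unless DRY_RUN=0.

```shell
# Hypothetical dry-run helper (not part of gcloud): prints each command and
# records it in LOG; set DRY_RUN=0 to execute the commands for real.
set -eu
LOG=""
run() {
  LOG="${LOG}$*
"
  if [ "${DRY_RUN:-1}" = "1" ]; then echo "+ $*"; else "$@"; fi
}

HOST_PROJECT="eu-test-project1"
NETWORK="gcp-test-vpc"
PROXY_RANGE="10.129.0.0/23"   # hypothetical; must match the proxy-only subnet's range

# The proxies reach the backends from the proxy-only subnet, so that
# range needs its own ingress rule on the backend ports.
run gcloud compute firewall-rules create allow-proxy-only-subnet \
  --project="$HOST_PROJECT" \
  --network="$NETWORK" \
  --direction=INGRESS \
  --action=ALLOW \
  --rules=tcp:80,tcp:443 \
  --source-ranges="$PROXY_RANGE"
```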

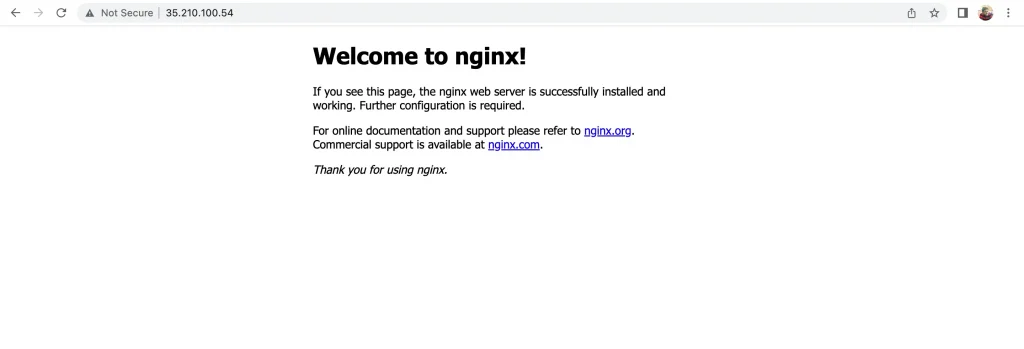

- Test the load balancer by accessing its IP address on port 80. The request reaches the backend instance in the other project.
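From a terminal, the same check can be sketched as: look up the forwarding rule's IP address and request it with curl. The forwarding rule name “central-fr” is hypothetical, and in dry-run mode (the default for the hypothetical run helper) the script substitutes the documentation address 203.0.113.10 instead of calling gcloud.

```shell
# Hypothetical dry-run helper (not part of gcloud): prints each command and
# records it in LOG; set DRY_RUN=0 to execute the commands for real.
set -eu
LOG=""
run() {
  LOG="${LOG}$*
"
  if [ "${DRY_RUN:-1}" = "1" ]; then echo "+ $*"; else "$@"; fi
}

HOST_PROJECT="eu-test-project1"
REGION="europe-west1"

# Look up the frontend IP of the (hypothetical) forwarding rule "central-fr";
# in dry-run mode use a placeholder from the documentation address range.
if [ "${DRY_RUN:-1}" = "1" ]; then
  LB_IP="203.0.113.10"
else
  LB_IP=$(gcloud compute forwarding-rules describe central-fr \
    --project="$HOST_PROJECT" --region="$REGION" \
    --format="get(IPAddress)")
fi

# Request port 80; the response should come from the backend in eu-test-project2.
run curl -sS "http://${LB_IP}/"
```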

- We can add this IP address to a DNS zone and map it to a domain name, so that the load balancer is reachable at that domain.
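If the domain is hosted in Cloud DNS, the record can be created with gcloud. The managed zone “example-zone”, the domain www.example.com., and the placeholder IP 203.0.113.10 are hypothetical; set LB_IP to your load balancer's address before running it. The run helper is also hypothetical and prints the command unless DRY_RUN=0.

```shell
# Hypothetical dry-run helper (not part of gcloud): prints each command and
# records it in LOG; set DRY_RUN=0 to execute the commands for real.
set -eu
LOG=""
run() {
  LOG="${LOG}$*
"
  if [ "${DRY_RUN:-1}" = "1" ]; then echo "+ $*"; else "$@"; fi
}

HOST_PROJECT="eu-test-project1"
ZONE="example-zone"               # hypothetical Cloud DNS managed zone
DOMAIN="www.example.com."         # hypothetical domain (note the trailing dot)
LB_IP="${LB_IP:-203.0.113.10}"    # load balancer IP; placeholder unless already set

# Point an A record at the load balancer's frontend IP.
run gcloud dns record-sets create "$DOMAIN" \
  --project="$HOST_PROJECT" \
  --zone="$ZONE" \
  --type=A \
  --ttl=300 \
  --rrdatas="$LB_IP"
```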

Summary

- Well suited to enterprise deployments where each team has its own project and backends. With it, we can place all backends behind a single URL.

- No need to set up a load balancer and a separate IP address in each project. This also saves money, because each reserved external IP address is billed (around $0.01 per hour at the time of writing) even when it is unused.

- Centralised network management using Shared VPC and firewall rules.

- At the time of writing, this feature is only available for regional internal and external GCP load balancers.

References

- https://cloud.google.com/blog/products/networking/cloud-load-balancing-gets-cross-project-service-referencing

- https://cloud.google.com/resource-manager/docs/creating-managing-organization

- https://cloud.google.com/vpc/docs/provisioning-shared-vpc

- https://cloud.google.com/load-balancing/docs/proxy-only-subnets

GitHub

https://github.com/HarshalRane23

Questions?

If you have any questions, I’ll be happy to read them in the comments. Follow me on Medium or LinkedIn.

The original article was published on Medium.