By Guillaume Blaquiere, Oct 28, 2021

Serverless is a game changer in the cloud and in application architecture. I’m a big fan of this technology, which abstracts away the server layer and all the toil it implies: monitoring, redundancy, patching, scaling, and so on.

However, you have less control over the low-level infrastructure. In a previous article, I demonstrated that another serverless product, BigQuery, had different processing performance depending on the region, mainly correlated with the region’s age. Cloud Run and Cloud Functions are also serverless products, so the question is:

Are there also performance differences among regions with Cloud Run and Cloud Functions?

Testing protocol

Here again, I focused my tests only on processing performance. To do so, I used the recursive Fibonacci algorithm to test CPU processing speed. Cloud Run and Cloud Functions run exactly the same algorithm.

You can find the Cloud Run code and the Cloud Functions code on GitHub.
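The repository holds the actual code. What follows is only a minimal sketch of such a benchmark service, assuming a Go HTTP server on Cloud Run that reads the Fibonacci input from a hypothetical `n` query parameter. The handler has the `func(http.ResponseWriter, *http.Request)` shape that an HTTP-triggered Go Cloud Function also uses, although a Cloud Function must be exported from a non-`main` package.

```go
package main

import (
	"fmt"
	"log"
	"net/http"
	"os"
	"strconv"
	"time"
)

// fib computes the n-th Fibonacci number with the naive recursive algorithm
// (fib(0) = 0, fib(1) = 1). Its exponential number of calls is what turns it
// into a CPU benchmark: fib(47) = 2971215073 needs billions of calls.
func fib(n int) int {
	if n < 2 {
		return n
	}
	return fib(n-1) + fib(n-2)
}

// fiboHandler reads the hypothetical "n" query parameter, computes fib(n)
// and reports the result with the server-side compute time.
func fiboHandler(w http.ResponseWriter, r *http.Request) {
	n, err := strconv.Atoi(r.URL.Query().Get("n"))
	if err != nil || n < 0 || n > 50 {
		http.Error(w, "query parameter n must be an integer in [0, 50]", http.StatusBadRequest)
		return
	}
	start := time.Now()
	result := fib(n)
	fmt.Fprintf(w, "fib(%d) = %d computed in %s\n", n, result, time.Since(start))
}

func main() {
	// Cloud Run tells the container which port to listen on via $PORT.
	port := os.Getenv("PORT")
	if port == "" {
		port = "8080"
	}
	http.HandleFunc("/", fiboHandler)
	log.Fatal(http.ListenAndServe(":"+port, nil))
}
```

The recursion is deliberately left unoptimized (no memoization). A faster algorithm would defeat the purpose of measuring raw CPU speed.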

Deploy the test environment

You can reproduce the tests yourself. To do so, deploy the environment by running the deploy.sh script. It deploys the code to every region available for each product (Cloud Run is available in slightly more regions than Cloud Functions).

Cloud Functions and Cloud Run are both deployed with 1 vCPU and 2 GB of memory so that they can be compared at the end.
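The content of deploy.sh isn't reproduced here. As a rough sketch, assuming a current gcloud CLI, the per-region Cloud Run deployment could be generated as below. Source-based (Buildpacks) deployment wasn't generally available when the article was written, so the real script's commands and flags may differ. The service name `fibo` and the region subset are hypothetical, and the Cloud Functions side is not covered.

```go
package main

import (
	"fmt"
	"strings"
)

// runDeployArgs returns the gcloud arguments that deploy one Cloud Run
// service from source (built with Buildpacks) in the given region, with
// 1 vCPU and 2 GiB of memory.
func runDeployArgs(service, region string) []string {
	return []string{
		"run", "deploy", service,
		"--source=.",
		"--region=" + region,
		"--cpu=1",
		"--memory=2Gi",
		"--allow-unauthenticated",
	}
}

func main() {
	// Hypothetical subset of regions; a real script would loop over all of them.
	regions := []string{"europe-west1", "us-central1", "asia-northeast1"}
	for _, region := range regions {
		fmt.Println("gcloud " + strings.Join(runDeployArgs("fibo", region), " "))
	}
}
```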

In addition, there is no special optimization in the Cloud Run packaging: I used the Buildpacks packaging alpha feature of Cloud Run. Buildpacks are also what Cloud Functions uses to build the code behind the scenes.

Run the tests

test-fibo.sh sends the same request to each deployed region. I chose to compute Fibonacci(47) because it takes between 10 and 15 seconds, which minimizes the cold start (very low with a Golang application) and the request travel latency. You can convince yourself by looking at the results: I’m located in France, yet some Asian and US regions show very good performance while some European regions are not so good.

I ran the same test 3 times to get an average processing time for each region.

Clean up

You can clean up with the destroy.sh script, which removes the deployed services. Even if leaving unused services in your project costs nothing, it adds noise to your project!

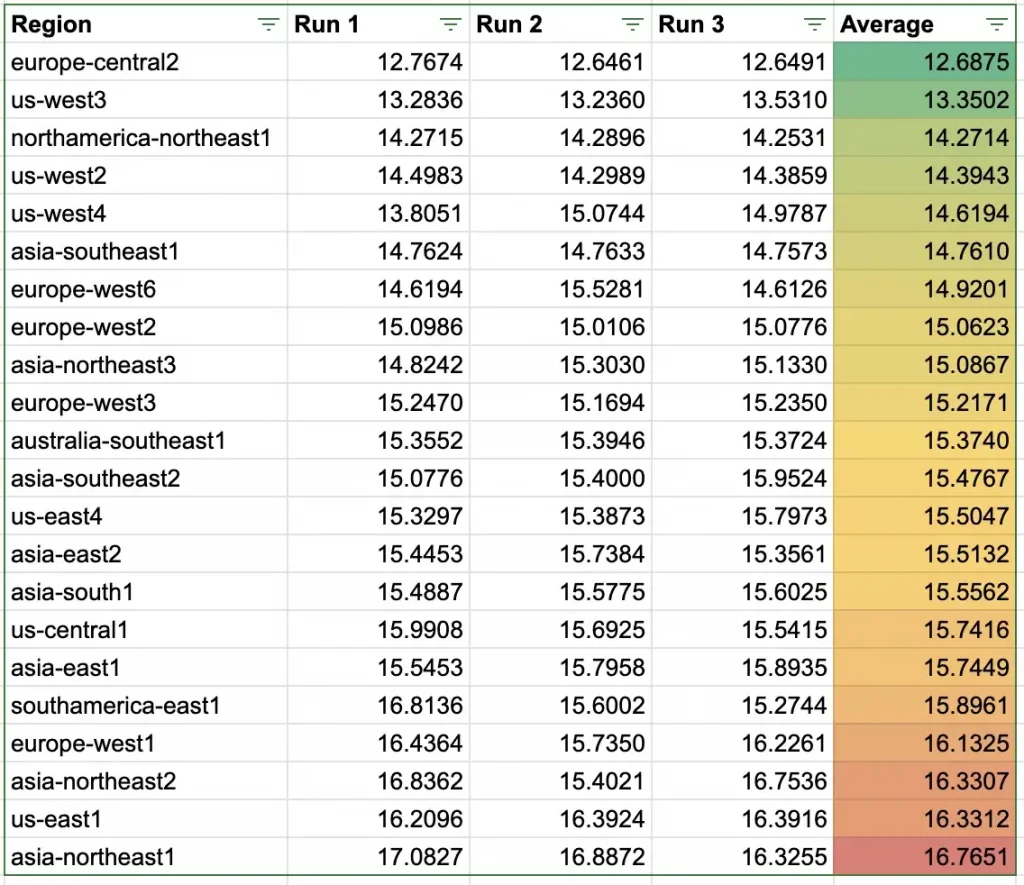

Cloud Functions performance per region

First test: Cloud Functions. Here are the performance results after 3 runs.

Wow, I didn’t expect such a result! We can observe a 4-second gap between the slowest and the fastest region, i.e. 30%!
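The article doesn't state which time the percentage is relative to. Assuming it is relative to the fastest region, the spread is (slowest − fastest) / fastest. This Go sketch computes it on illustrative numbers:

```go
package main

import "fmt"

// relativeSpread returns how much slower the slowest region is than the
// fastest one, as a percentage of the fastest time:
// (slowest - fastest) / fastest * 100.
func relativeSpread(seconds []float64) float64 {
	if len(seconds) == 0 {
		return 0
	}
	fastest, slowest := seconds[0], seconds[0]
	for _, s := range seconds[1:] {
		if s < fastest {
			fastest = s
		}
		if s > slowest {
			slowest = s
		}
	}
	return (slowest - fastest) / fastest * 100
}

func main() {
	// Illustrative per-region mean times in seconds, not measured results.
	fmt.Printf("%.1f%%\n", relativeSpread([]float64{10, 12, 15})) // 50.0%
}
```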

My assumption with BigQuery was that the age of the region was correlated with performance. Let’s have a look at that for Cloud Functions.

This time, it’s not so obvious. It holds for some regions: the oldest ones seem to be the slowest, and us-west3 and europe-central2 confirm that the younger, the faster.

But asia-northeast2 and asia-southeast2 are counterexamples.
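The comparison above is read from the tables. Spearman’s rank correlation is one way to put a single number on “the younger, the faster”, though it is not what the author computed. Here is a Go sketch using the no-ties formula ρ = 1 − 6Σd² / (n(n² − 1)). The launch-order input (1 = oldest region) and the times are hypothetical. A negative ρ would mean younger regions tend to be faster, and counterexamples pull ρ toward 0.

```go
package main

import (
	"fmt"
	"sort"
)

// ranks returns the 1-based rank of each value (smallest value = rank 1).
// It assumes there are no ties.
func ranks(values []float64) []float64 {
	idx := make([]int, len(values))
	for i := range idx {
		idx[i] = i
	}
	sort.Slice(idx, func(a, b int) bool { return values[idx[a]] < values[idx[b]] })
	r := make([]float64, len(values))
	for rank, i := range idx {
		r[i] = float64(rank + 1)
	}
	return r
}

// spearman computes Spearman's rank correlation with the no-ties formula
// rho = 1 - 6 * sum(d^2) / (n * (n^2 - 1)). It returns 0 for invalid input.
func spearman(x, y []float64) float64 {
	if len(x) != len(y) || len(x) < 2 {
		return 0
	}
	n := float64(len(x))
	rx, ry := ranks(x), ranks(y)
	var sumD2 float64
	for i := range rx {
		d := rx[i] - ry[i]
		sumD2 += d * d
	}
	return 1 - 6*sumD2/(n*(n*n-1))
}

func main() {
	// Hypothetical data: launch order of five regions and their mean times.
	launchOrder := []float64{1, 2, 3, 4, 5}
	seconds := []float64{15.1, 14.2, 14.8, 12.9, 12.5}
	fmt.Printf("rho = %.2f\n", spearman(launchOrder, seconds))
}
```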

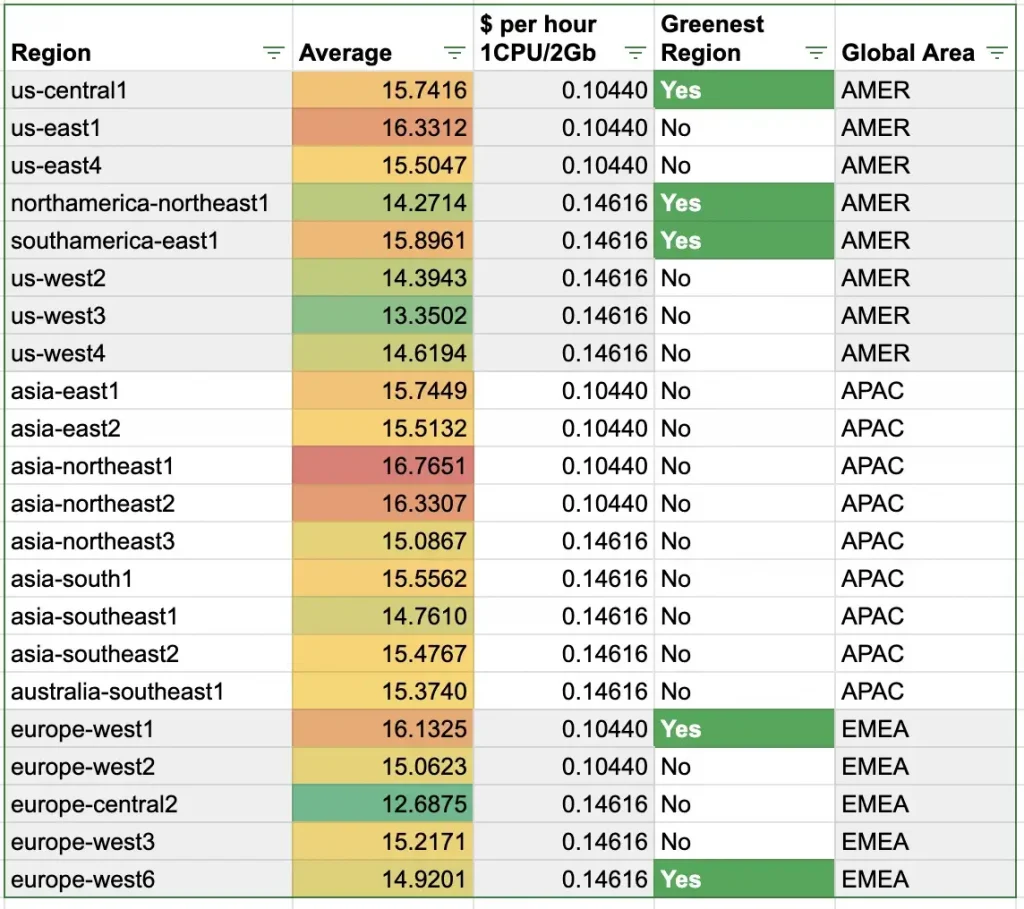

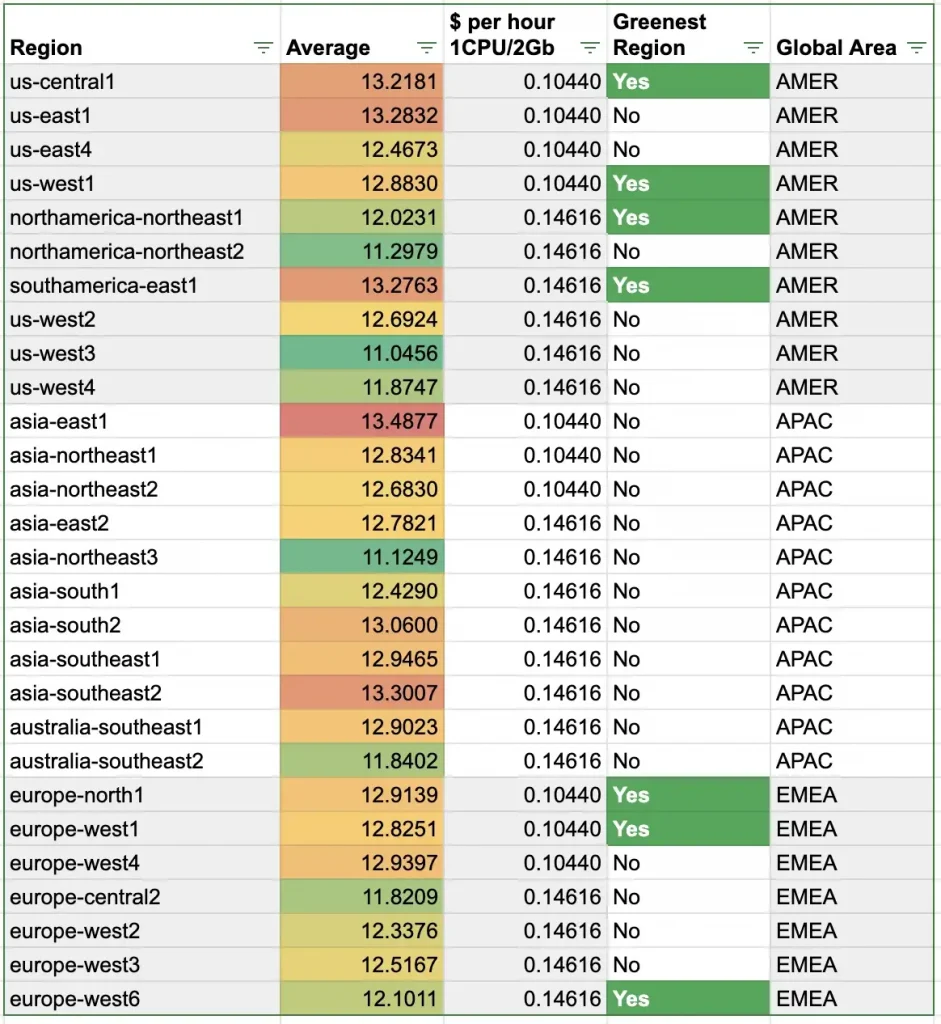

For a final comparison, and to make the right choice, we also need each region’s cost and CO2 impact, so that we can choose the fastest, cheapest and/or greenest region.

Here, the results are consistent with BigQuery: the most expensive regions globally offer better performance than the cheapest ones.
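When speed, price and CO2 pull in different directions, one possible way to shortlist regions is to keep only the Pareto-optimal ones. These are the regions that no other region beats on all three criteria at once. This technique is not part of the article. The Go sketch below uses hypothetical region names and values.

```go
package main

import "fmt"

// Region holds the three criteria to minimize. All values used here are
// hypothetical.
type Region struct {
	Name    string
	Seconds float64 // mean processing time
	Price   float64 // relative price
	CO2     float64 // grid carbon intensity, e.g. gCO2eq/kWh
}

// dominates reports whether a is at least as good as b on every criterion
// and strictly better on at least one.
func dominates(a, b Region) bool {
	noWorse := a.Seconds <= b.Seconds && a.Price <= b.Price && a.CO2 <= b.CO2
	better := a.Seconds < b.Seconds || a.Price < b.Price || a.CO2 < b.CO2
	return noWorse && better
}

// paretoFront keeps the regions that no other region dominates, preserving
// the input order.
func paretoFront(regions []Region) []Region {
	var front []Region
	for i, r := range regions {
		dominated := false
		for j, other := range regions {
			if i != j && dominates(other, r) {
				dominated = true
				break
			}
		}
		if !dominated {
			front = append(front, r)
		}
	}
	return front
}

func main() {
	regions := []Region{
		{"region-a", 12.5, 1.2, 400}, // fastest
		{"region-b", 14.0, 1.0, 100}, // cheapest and greenest
		{"region-c", 15.0, 1.1, 450}, // beaten by region-b on every criterion
	}
	for _, r := range paretoFront(regions) {
		fmt.Println(r.Name)
	}
}
```

The final choice among the remaining regions still depends on which criterion matters most for your workload.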

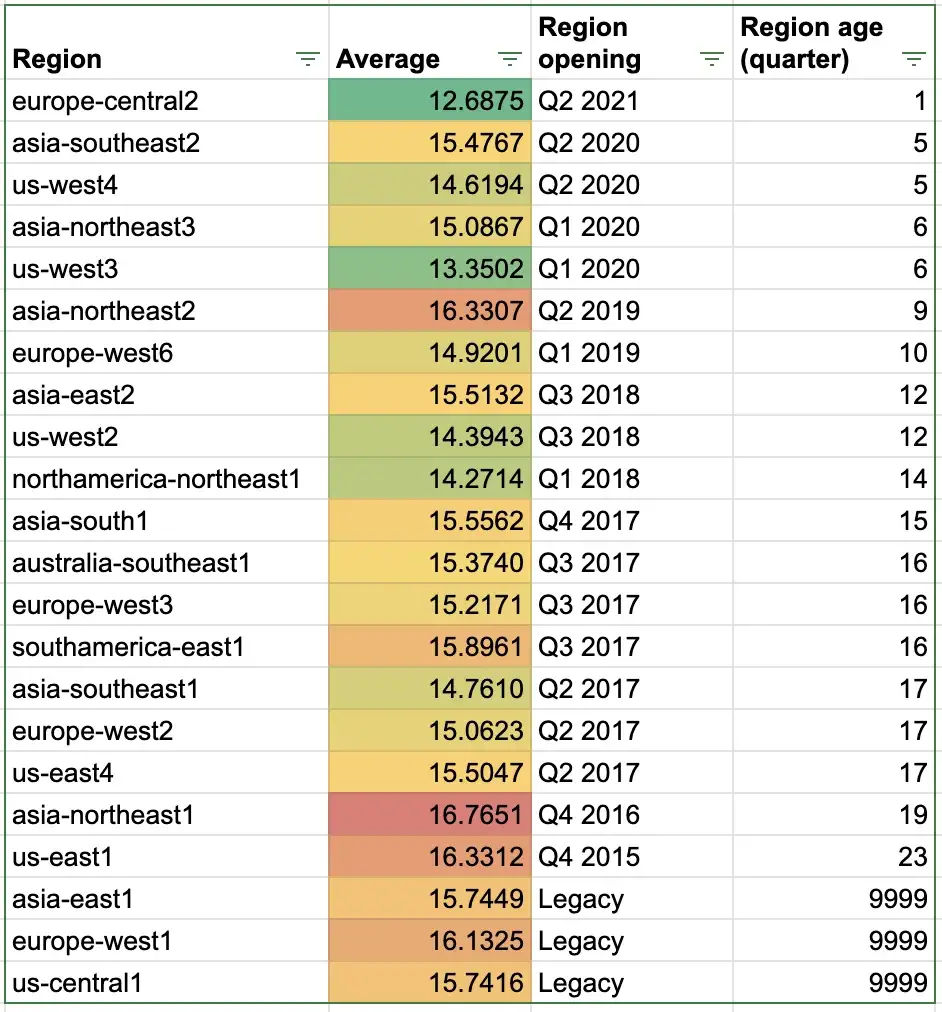

Cloud Run performance per region

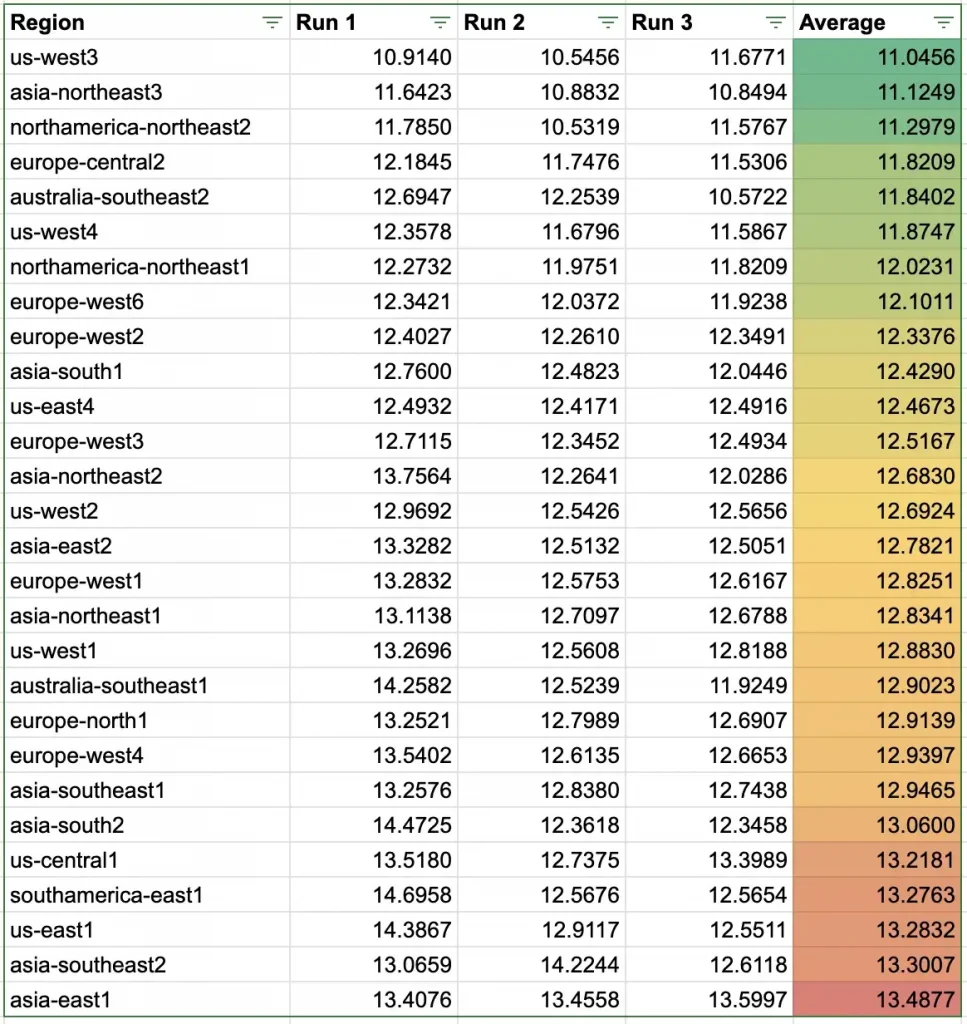

Next, Cloud Run. Here are the performance results after 3 runs.

Here again, there is a difference of up to 2.5 seconds between the slowest and the fastest region, i.e. 20%.

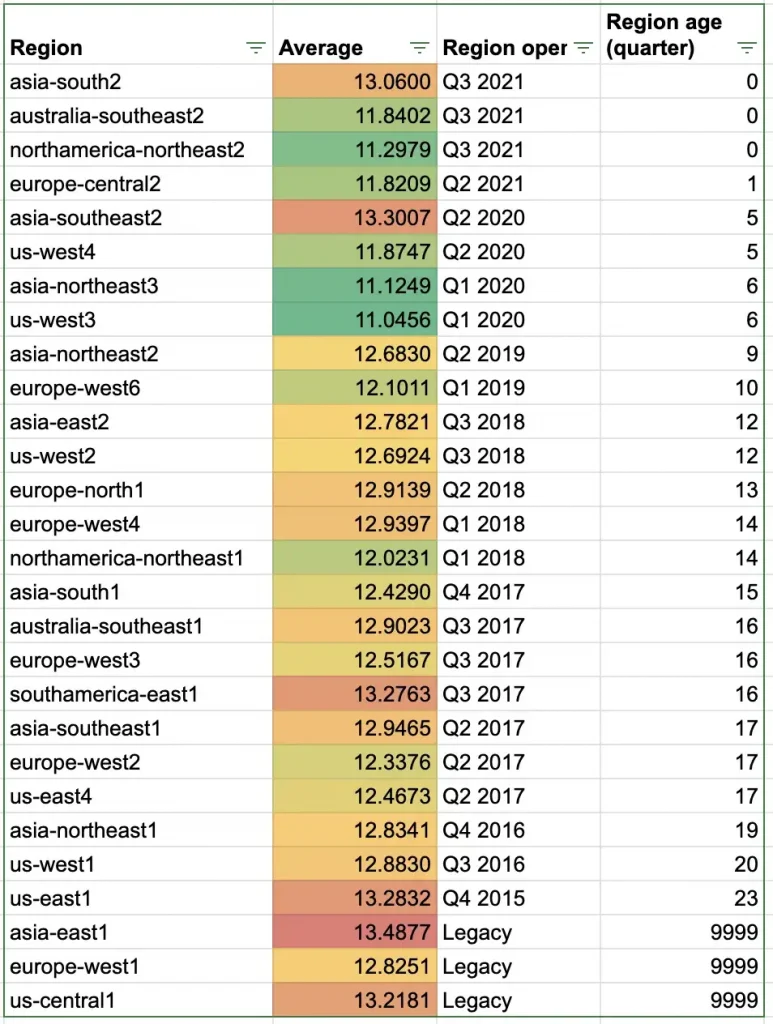

Now, let’s correlate that with the region’s age.

Overall, this time, the younger, the faster, but exceptions exist: asia-south2 and asia-southeast2 are recent regions and among the slowest in this test.

Finally, the last table, to make the right choice among the fastest, cheapest and/or greenest regions.

Fortunately, the rule “the slower, the cheaper” holds here again.

Cloud Run vs Cloud Functions performance

Because I ran the tests under exactly the same conditions, i.e. with:

- 1 vCPU and 2 GB of memory on both platforms

- The exact same Fibonacci code

- The same container packaging engine (Buildpacks)

I found it interesting to compare the performance of the 2 serverless products.

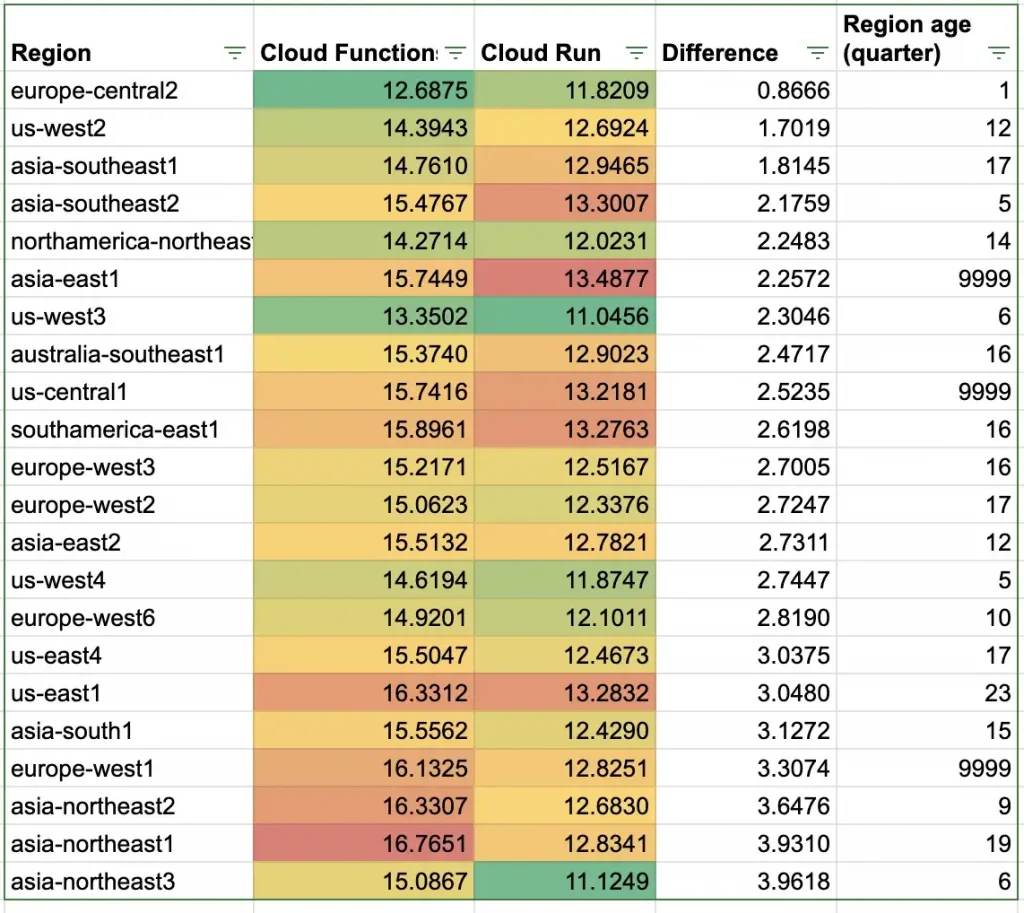

I kept only the regions common to both products and sorted them by the performance difference between Cloud Functions and Cloud Run.
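This filtering and sorting step can be sketched in Go as follows. It assumes per-region mean times in seconds for each product. The region names and values are hypothetical, and sorting with the largest gap first is an assumption, since the article doesn't give the sort direction.

```go
package main

import (
	"fmt"
	"sort"
)

// Diff is the per-region gap between the two products, in seconds.
type Diff struct {
	Region string
	Delta  float64 // Cloud Functions time minus Cloud Run time
}

// compareCommon keeps only regions present in both maps and sorts them by
// how much slower Cloud Functions is than Cloud Run (largest gap first),
// breaking ties by region name so the order is deterministic.
func compareCommon(functions, run map[string]float64) []Diff {
	var diffs []Diff
	for region, cf := range functions {
		if cr, ok := run[region]; ok {
			diffs = append(diffs, Diff{region, cf - cr})
		}
	}
	sort.Slice(diffs, func(i, j int) bool {
		if diffs[i].Delta != diffs[j].Delta {
			return diffs[i].Delta > diffs[j].Delta
		}
		return diffs[i].Region < diffs[j].Region
	})
	return diffs
}

func main() {
	// Hypothetical mean times in seconds.
	functions := map[string]float64{"region-a": 16.0, "region-b": 14.5, "region-c": 15.0}
	run := map[string]float64{"region-a": 13.0, "region-b": 13.5, "region-d": 12.0}
	for _, d := range compareCommon(functions, run) {
		fmt.Printf("%s: %+.1fs\n", d.Region, d.Delta)
	}
}
```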

Surprisingly, a region’s performance color ranking for one product doesn’t predict the other product’s performance in the same region.

The exceptions are europe-central2, northamerica-northeast1 and us-east1.

In addition, there is a difference of up to 4 seconds in execution time between Cloud Functions and Cloud Run.

This is surprising because, to my understanding, the 2 products share the same underlying infrastructure, so I didn’t expect these results.

- Is it the automatic packaging of Cloud Functions that slows down the execution? A 10 to 30% loss is too high to be explained by packaging alone.

- Is it the product age? It’s my best assumption, but then the “shared underlying infrastructure” isn’t fully accurate, or at least not for the “compute” (CPU/memory) part; it can still be true for the network, security and scalability management (…) parts.

Region and performance impacts

Serverless really means what it says: you don’t manage the underlying infrastructure, server types, CPU generation, network performance, and so on. Therefore, you depend on the data center’s upgrades and hardware versions.

Compared to BigQuery, where the cost stayed the same even when processing was slower, these results worry me more. Indeed, in a slow region, you are penalized twice:

- The processing time is longer, and because you pay for the CPU/memory time you use, you pay more

- The user experience suffers from higher latency (in reality, for requests that aren’t compute-intensive, the difference is invisible to end users)
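The first penalty is simple proportionality. If you pay per unit of allocated CPU and memory time, a request that runs 20% longer costs 20% more. Here is a Go sketch of that arithmetic. The unit prices are arbitrary placeholders, not Google Cloud prices, and per-request fees, free tiers and billing-time rounding are ignored.

```go
package main

import "fmt"

// Pricing holds per-second unit prices in arbitrary price units
// (placeholders, not actual Google Cloud prices).
type Pricing struct {
	PerVCPUSecond float64
	PerGiBSecond  float64
}

// computeCost returns the CPU and memory cost of one request that keeps
// vcpu vCPUs and memGiB GiB allocated for the given number of seconds.
func computeCost(p Pricing, vcpu, memGiB, seconds float64) float64 {
	return seconds * (vcpu*p.PerVCPUSecond + memGiB*p.PerGiBSecond)
}

func main() {
	p := Pricing{PerVCPUSecond: 1.0, PerGiBSecond: 0.1}
	// Same 1 vCPU / 2 GiB configuration, hypothetical fast and slow regions.
	fast := computeCost(p, 1, 2, 12.5)
	slow := computeCost(p, 1, 2, 15.0)
	fmt.Printf("fast: %.2f  slow: %.2f  (+%.0f%%)\n", fast, slow, (slow/fast-1)*100)
}
```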

In addition, the performance difference between Cloud Functions and Cloud Run is an argument for using Cloud Run in all cases; the cases where Cloud Functions has an advantage over Cloud Run are now very rare.

However, keep in mind that Google Cloud can update its data center hardware at any time, without notice, and these results could be wrong in 3, 6 or 12 months!

Don’t rely on the infrastructure, it’s serverless!

You can find the code to run the test yourself in my GitHub repository.

The original article was published on Medium.