By Guillaume Blaquiere. Feb 10, 2022

Managed services are a new paradigm in which the cloud provider manages the infrastructure and the network for you. You only have to focus on the business value you build on top of it, and you pay only for the instances that are managed for you.

In its perfect form, a managed service becomes a serverless service: a pay-as-you-use solution. You don’t have to worry about the infrastructure or the cost: if you don’t use it, you don’t pay for it.

The Google Cloud proposition

Google Cloud offers a ton of serverless products; some are unique, with no equivalent on other cloud platforms.

- App Engine, released in 2008, was one of the first serverless products, long before the popular AWS Lambda. To this day, competitors have no real equivalent to App Engine (in pay-as-you-use mode)

- Cloud Run, a serverless container-as-a-service platform, also without any equivalent (in pay-as-you-use mode)

- Pub/Sub, a global messaging service where you never worry about the location: it’s simply global!

- BigQuery, Firestore, Cloud Scheduler, Workflows, Cloud Tasks… and so many others

And a serverless relational database, something similar to AWS Aurora? Nothing!

What? Nothing for relational databases? If you want one, you have to use Cloud SQL, nothing else.

And it’s not pay-as-you-use; it’s “only” managed, and the smallest instance (1 vCPU, 3.75 GB of memory) is about $50 per month!

I asked Google Cloud many times in the past years about a pay-as-you-use relational database service, and the answer was always no, backed by strong and hard-to-overcome arguments, but…

The use cases

But when you have large developer teams, with one Google Cloud project per developer, do you really want to pay $50 per month per developer to test a few deployments per day or week?

And do you really want to keep a server up and running, to burn power and generate CO2 for a few tests per day/week?

Maybe $50 is not much for large companies and dev teams, but it is still too much to pay for an instance that is barely used.

And for other organizations, like associations with many volunteers and little or no budget, $50 per month is simply too much!

Common expectations

Those use cases aim to save money and share the same expectations, or more exactly “non-expectations”:

- The service can fail from time to time

- The service performance can be low, at least at startup (when the instance comes to life, the “cold start”)

The Cloud Run solution

Cloud Run is a flexible service to host almost anything. You deploy a container and pay as you use it: if you don’t use it, you pay nothing; if you have a lot of traffic, it scales to hundreds of parallel instances.

However, it comes with two constraints:

- Cloud Run serves HTTP traffic only

- Cloud Run is stateless

Is Cloud Run really the solution??

When you look at a relational database engine, like MySQL, you find these requirements:

- Serve requests over TCP

- Persist your data

The exact opposite of Cloud Run!

The Cloud Run richness

Cloud Run is container-based and offers a lot of flexibility. But the true richness is its powerful features. With them, you can go beyond the usual serverless limits, and unleash your imagination.

To use Cloud Run, we have to solve 2 issues:

- Persistent Storage

- TCP network communication

Persistent Storage on Cloud Run

Still in preview, the gen2 execution runtime comes with great features:

- A non-sandboxed runtime environment

- The ability to mount network volumes

This latter feature enables new use cases on Cloud Run. Even if it comes with limitations and won’t really be used in the final solution, it’s worth knowing about and trying when your use case requires it.

So, for the serverless database, the idea is to use Cloud Storage through a GCSFuse mount point, or to use Cloud Filestore, a managed NFS solution on Google Cloud.

The GCSFuse limitation

Cloud Storage is blob storage, and its features and capabilities differ from those of block storage (the usual disk in any computer). In addition, Cloud Storage is only accessible through an API (JSON or XML).

However, GCSFuse is a tool that translates Linux file system access into HTTP calls. That way, it’s possible to mount a bucket in a Linux environment and use it as a regular directory.

That wrapper translates block storage access into blob storage access and there are, of course, limits and unsupported features.

First of all, the latency is high, because GCSFuse performs HTTP API calls, which take much longer than native file system calls.

But low performance for non-critical or test databases isn’t a concern. The issue is elsewhere.

The issue comes from specific features that MySQL requires to optimize reads and writes in its storage files, like keeping a file open and streaming reads and writes into it.

That’s not possible with Cloud Storage: you can’t partially update a file. And GCSFuse doesn’t support that kind of usage.

The MySQL logs are clear: the storage is not usable, and MySQL exits with an error. That solution isn’t the right one.
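To make the partial-update limitation concrete, here is a minimal sketch comparing an in-place write on a local file with the same change on a blob store. `ToyBlobStore` is a hypothetical in-memory stand-in, not the Cloud Storage API, and the file name `table.ibd` is only an illustration. The point is that changing 2 bytes in a blob means re-uploading the whole object.

```python
import os
import tempfile
from typing import Dict, Tuple


def patch_local_file(path: str, offset: int, data: bytes) -> None:
    """Block-storage style: overwrite a few bytes in place."""
    with open(path, "r+b") as f:
        f.seek(offset)
        f.write(data)


class ToyBlobStore:
    """Hypothetical in-memory object store: objects are read or replaced as a whole."""

    def __init__(self) -> None:
        self._objects: Dict[str, bytes] = {}
        self.bytes_uploaded = 0

    def put(self, name: str, content: bytes) -> None:
        self._objects[name] = bytes(content)
        self.bytes_uploaded += len(content)

    def get(self, name: str) -> bytes:
        return self._objects[name]


def patch_blob(store: ToyBlobStore, name: str, offset: int, data: bytes) -> None:
    """Blob style: download everything, patch in memory, upload everything again."""
    content = bytearray(store.get(name))
    content[offset:offset + len(data)] = data
    store.put(name, bytes(content))


def demo() -> Tuple[bytes, bytes, int]:
    page = b"A" * 1024

    # Local disk: only the 2 patched bytes are written.
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "table.ibd")
        with open(path, "wb") as f:
            f.write(page)
        patch_local_file(path, 10, b"ZZ")
        with open(path, "rb") as f:
            local_result = f.read()

    # Blob store: the same 2-byte change re-uploads the full object.
    store = ToyBlobStore()
    store.put("table.ibd", page)
    store.bytes_uploaded = 0  # count only the cost of the patch itself
    patch_blob(store, "table.ibd", 10, b"ZZ")
    return local_result, store.get("table.ibd"), store.bytes_uploaded
```

Both approaches end with the same content, but the blob version pays for the full object on every small write, which does not fit the way a database engine updates its files.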

The Cloud Filestore show stoppers

The other option to mount a storage system on Cloud Run gen2 is Filestore. It’s a managed NFS system and it works well. The disk access is fast and the Filestore instance can be snapshotted to back up the data.

However, there are two main issues:

- Firstly, Cloud Filestore instances are only accessible on a private IP in the project VPC. No problem, you can use a serverless VPC connector to bridge the Cloud Run instance with your project VPC. However, it costs about $17 per month and it’s not serverless (always on)

- Secondly, the Filestore price isn’t that high: $0.2 per GB per month. Totally fair. The problem comes from the minimum capacity of a Filestore instance: 1 TB, thus $200 per month.

This great solution isn’t financially sustainable (minimum $217 per month) and can’t be chosen to save money!
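The arithmetic behind that minimum can be written down in a few lines. The prices are the ones quoted above and will vary by region and over time; counting 1 TB as 1000 GB is an assumption that matches the $200 figure.

```python
# Prices quoted in the article (USD per month); treat them as illustrative.
FILESTORE_PRICE_PER_GB_MONTH = 0.20
FILESTORE_MIN_CAPACITY_GB = 1000  # assumption: 1 TB counted as 1000 GB
VPC_CONNECTOR_PER_MONTH = 17.0
CLOUD_SQL_SMALLEST_PER_MONTH = 50.0


def filestore_option_monthly_cost() -> float:
    """Minimum monthly bill of the Filestore option, even with zero traffic."""
    storage = FILESTORE_PRICE_PER_GB_MONTH * FILESTORE_MIN_CAPACITY_GB
    return storage + VPC_CONNECTOR_PER_MONTH


if __name__ == "__main__":
    print(f"Filestore option: ${filestore_option_monthly_cost():.0f} per month")
    print(f"Smallest Cloud SQL instance: ${CLOUD_SQL_SMALLEST_PER_MONTH:.0f} per month")
```

In other words, the Filestore option costs more than four times the Cloud SQL instance it was supposed to replace.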

However, I still released a container version with that option.

The final choice

In the end, mounting a file system on Cloud Run was neither a successful nor a sustainable solution. The fallback is an old-school one:

- At startup, download the data from Cloud Storage and load it into memory

- At shutdown, when the Cloud Run instance gracefully stops, upload the data to Cloud Storage.

For better efficiency, the data is compressed and stored in a single file on Cloud Storage.

The solution has the advantage of working with both the gen1 and gen2 runtime environments. In addition, it’s possible to enable versioning on Cloud Storage to keep different versions of the database, like snapshots, with the ability to restore them.
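The startup and shutdown steps above can be sketched as follows. This is not the code from the repository: the `download` and `upload` callables are hypothetical stand-ins for the Cloud Storage calls, and the sketch assumes an archive already exists at startup (a first run with an empty bucket is not handled).

```python
import io
import os
import tarfile
import tempfile
from typing import Callable


def pack_data_dir(data_dir: str) -> bytes:
    """Compress the whole data directory into a single .tar.gz held in memory."""
    buffer = io.BytesIO()
    with tarfile.open(fileobj=buffer, mode="w:gz") as archive:
        for entry in sorted(os.listdir(data_dir)):
            archive.add(os.path.join(data_dir, entry), arcname=entry)
    return buffer.getvalue()


def unpack_data_dir(archive_bytes: bytes, data_dir: str) -> None:
    """Extract the archive into the data directory."""
    os.makedirs(data_dir, exist_ok=True)
    with tarfile.open(fileobj=io.BytesIO(archive_bytes), mode="r:gz") as archive:
        # Use the safer "data" extraction filter when this Python version has it.
        if hasattr(tarfile, "data_filter"):
            archive.extractall(data_dir, filter="data")
        else:
            archive.extractall(data_dir)


def on_startup(download: Callable[[], bytes], data_dir: str) -> None:
    """Startup: fetch the single archive and restore the database files."""
    unpack_data_dir(download(), data_dir)


def on_shutdown(upload: Callable[[bytes], None], data_dir: str) -> None:
    """Graceful stop: compress the database files and send them back."""
    upload(pack_data_dir(data_dir))
```

Keeping everything in one compressed object means one download and one upload per instance lifetime, and it lets bucket versioning act as a snapshot history.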

However, that asynchronous data persistence implies edge cases and a higher risk of data loss:

- If the container crashes (out of memory, for instance), the data updated in memory by the database engine is lost.

- When you deploy a new version of the Cloud Run serverless database, the new instance created with the new version will download the data from Cloud Storage, while the data updated by the previous version is still in the memory of its Cloud Run instance and not yet uploaded to Cloud Storage.

Those points can be mitigated in future versions.

TCP communication on Cloud Run

The other blocker to overcome is the communication protocol. MySQL uses TCP, and Cloud Run only serves HTTP.

However, Cloud Run supports HTTP/2 bidirectional streaming. That’s the key to solving the issue, but it requires wrapping the TCP communication in the HTTP/2 protocol.

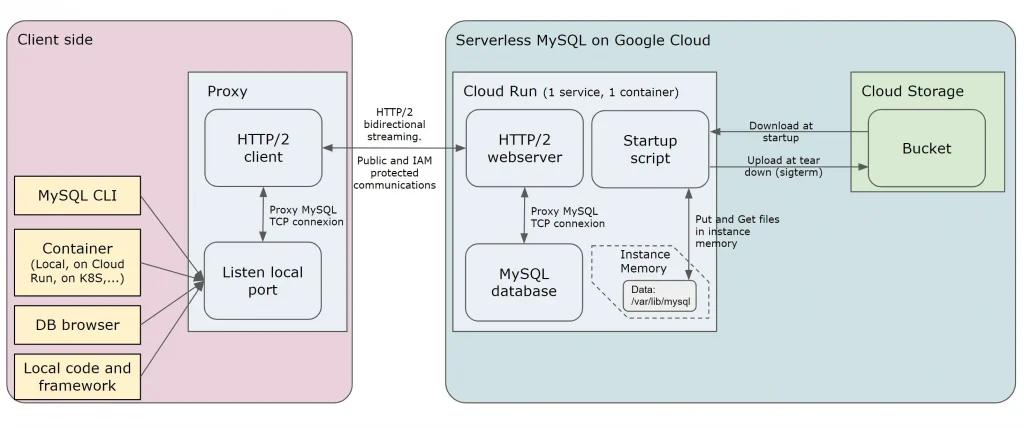

On the server side, in the Cloud Run service where the MySQL instance runs in the background, an HTTP server is exposed. When a connection comes in, it opens a TCP connection to the MySQL database and streams the communication over HTTP/2.

On the client side, in the developer environment or in other services (on Cloud Run or elsewhere), a proxy is required to wrap the TCP communication of the client into an HTTP/2 request.

The first request opens the connection, and then the traffic is streamed over HTTP/2. This first request can also include a security header to reach an IAM-secured Cloud Run service and therefore restrict access to the database to authorized accounts only.
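Both proxies share the same core idea: copy bytes in both directions between the TCP socket and the stream. Here is a minimal sketch of that relay loop only. It is an assumption-laden illustration: plain sockets stand in for the HTTP/2 stream, the HTTP/2 framing and the security header are not shown, and the names `pump` and `relay` are mine, not taken from the repository.

```python
import socket
import threading


def pump(source: socket.socket, destination: socket.socket) -> None:
    """Copy bytes one way until the source closes, then signal end-of-stream."""
    while True:
        chunk = source.recv(4096)
        if not chunk:
            break
        destination.sendall(chunk)
    try:
        destination.shutdown(socket.SHUT_WR)
    except OSError:
        pass  # the peer is already gone


def relay(client_side: socket.socket, database_side: socket.socket) -> None:
    """Relay traffic in both directions; return once both directions are done."""
    upstream = threading.Thread(target=pump, args=(client_side, database_side))
    downstream = threading.Thread(target=pump, args=(database_side, client_side))
    upstream.start()
    downstream.start()
    upstream.join()
    downstream.join()
```

In the real setup, one end of each relay is a regular TCP socket (the MySQL client or the MySQL server) and the other end is the HTTP/2 request and response body, so the database protocol travels unchanged inside the stream.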

Packaging and startup

Cloud Run gives you the freedom to configure the container runtime. That flexibility allows assembling all the pieces and controlling the container startup with a script that does the following (in GCS mode):

- Download the data from Cloud Storage

- (Register the SIGTERM callback, triggered by the graceful stop of Cloud Run, that compresses the database files and sends them back to Cloud Storage)

- Extract the data and load it into the container memory

- Start MySQL in the background

- Start the webserver
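The ordering of those steps can be sketched as below. The real entrypoint is a startup script in the container, so this is only an illustration of the sequence: the five callables are hypothetical placeholders for the actual commands.

```python
import signal
from typing import Callable

Step = Callable[[], None]


def run_entrypoint(download: Step, save_and_upload: Step, extract: Step,
                   start_mysql: Step, start_webserver: Step) -> None:
    """Run the startup steps in order and register the graceful-stop callback."""
    download()

    def on_sigterm(signum, frame):
        # Cloud Run sends SIGTERM before stopping the instance.
        # A real script would also stop MySQL cleanly and then exit.
        save_and_upload()

    signal.signal(signal.SIGTERM, on_sigterm)

    extract()
    start_mysql()
    start_webserver()
```

Registering the handler before starting MySQL means a stop notification that arrives during startup is not silently ignored.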

Here, the global architecture of the solution

Can I use it?

The final solution is available as open source. You can deploy a container on Cloud Run with a little configuration and try it with the Cloud Storage or the Filestore option.

You can have a look at the code in my GitHub repository. There is no real logic, mainly network communication wrapping and container tricks with dumb-init to handle the SIGTERM that Cloud Run sends as its graceful stop notification.

You can use it, keeping in mind the limits and the risks to your data. For the majority of non-critical use cases, there won’t be an issue. If you start to have too many problems or difficulties, maybe it’s time to switch to a truly managed solution: Cloud SQL.

Anyway, a serverless and persistent MySQL database can be a reality on Google Cloud, and I still have this serverless dream…

If I can do it, Google Cloud can do better!

I built this for the “Google Cloud easy as a Pie serverless hackathon”, with absolutely no ambition to win, only for the challenge.

Have a look at my (fun) submission video!

The original article was published on Medium.