By minherz. Dec 10, 2022

Actionable events are a very useful tool when operating production systems. A common example is the ability to restart VM instances whose CPU utilization stays at 99% for longer than a minute. Depending on the infrastructure or cloud provider, events can be implemented as triggers that are bound to an executing script, encoded into the service (e.g. the action can be provided at the time of VM instance creation), or described as system alerts that feature customizable reactions. Many other types of implementation are possible. For example, see CloudWatch Events in AWS. The main challenge is usually to understand the terminology, so that you know how to identify the event and how to bind your implementation to it, so that it is triggered under the expected conditions.

In Google Cloud

Google Cloud is built by engineers for engineers. It means that you can implement anything you need as long as there is a public API for it. However, it also means that sometimes you will spend time searching for that API and then figuring out how to use it. This is the case with actionable events, which in Google Cloud are called alerts.

In Google Cloud you define alert policies on metric or log data using threshold alerting, data absence, SLOs, uptime checks, and more advanced operations such as rate of change and aggregations written in MQL, the Monitoring Query Language. Alert policies can be as simple or as complex as your team requires, using filtering and grouping to combine the data into the desired set. The alert policy contains the condition for triggering the alert, the notification channel(s) through which you are notified, and a documentation section that can include dynamic data and links to dashboards, playbooks, and more for faster troubleshooting. Each time the policy’s conditions are met, the system creates a new incident. To implement actionable alerts you need to define the “right” notification channel, one that allows you “to take an action”.

Implementing on-alert action

There are three methods you can use to execute an action in response to the triggered alert:

- Define the Cloud Build trigger.

- Define the Cloud Function.

- Define a REST API endpoint that will run your logic.

The first two can be triggered using Pub/Sub or Webhook notification channels. The last method is triggered only by Webhook. I would recommend using Cloud Build because (1) it uses a declarative language; (2) the configuration is easy to create, maintain and control; (3) being a serverless service, it is cheap to maintain; and (4) being an integral part of Google Cloud, it lets you do anything that you can do using the Google Cloud CLI or API. But if you need some kind of proxy so you can trigger execution of, say, a Terraform plan in Terraform Cloud, then the second or third method is for you.

You can find the JSON schema of the payload sent within the Pub/Sub message or HTTP request in the documentation.
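
To give a feel for consuming that payload, here is a minimal Go sketch that decodes a notification and decides whether to act on it. The field names (`incident`, `incident_id`, `policy_name`, `state`, `version`) are assumptions that should be checked against the documented schema, the sample values are made up, and `parseNotification` and `shouldAct` are hypothetical helpers.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// Notification mirrors a small part of the alert payload.
// The JSON field names are assumptions; verify them against the documented schema.
type Notification struct {
	Incident struct {
		IncidentID string `json:"incident_id"`
		PolicyName string `json:"policy_name"`
		State      string `json:"state"`
	} `json:"incident"`
	Version string `json:"version"`
}

// parseNotification decodes the JSON body of a webhook request
// or the data of a Pub/Sub message.
func parseNotification(data []byte) (Notification, error) {
	var n Notification
	err := json.Unmarshal(data, &n)
	return n, err
}

// shouldAct (hypothetical helper) reports whether the notification
// describes a newly opened incident, assuming the state value "open".
func shouldAct(n Notification) bool {
	return n.Incident.State == "open"
}

func main() {
	// Made-up sample payload for illustration only.
	sample := []byte(`{"incident":{"incident_id":"0.abc","policy_name":"error-rate","state":"open"},"version":"1.2"}`)
	n, err := parseNotification(sample)
	if err != nil {
		fmt.Println("cannot decode notification:", err)
		return
	}
	fmt.Println(n.Incident.PolicyName, shouldAct(n))
}
```

Acting only on opened incidents keeps the action from running a second time when a later notification reports that the incident was closed.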

Describing event’s conditions in the alert policies

This is the part where the “engineer in you” is important. The user experience of defining events using alerts in Google Cloud is not great today. Basically, to be able to define meaningful alerts you will need to write your own MQL, which is, yes, another scripting language. Let me give you a few tips about the UI and the MQL resources that you can use.

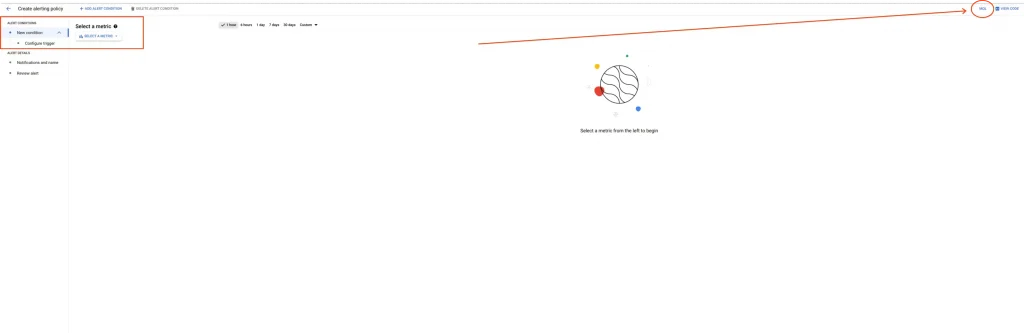

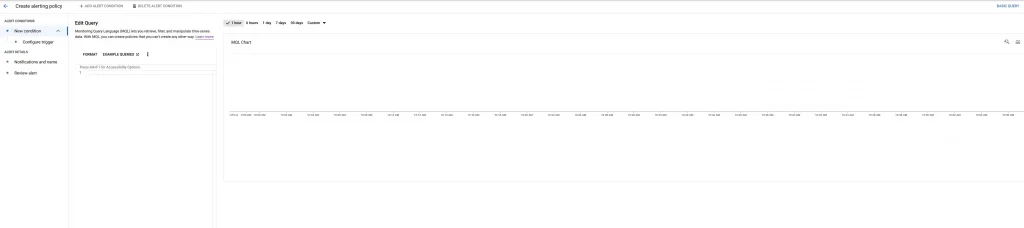

When you create a new alert using Cloud Console, you find yourself in a multi-step wizard-like interface.

It limits you to selecting a single metric for querying and then lets you define the threshold for the alert. This can be enough if your event is “CPU utilization reaches 100%”, but it is not enough if you want to catch an event such as “the ratio of responses returning a 200 status code to the total number of responses is less than 90%” or something similarly sophisticated. In these cases you can switch the wizard to MQL input by pressing the small MQL button in the top right corner of the window (see the red arrow in the previous screenshot). The wizard window will then look slightly different:

Now to the hard part. Almost all complex alerts use some kind of ratio of metrics, which is implemented using the ratio operation. Descriptions of other methods to reach the same goal can be found in the MQL examples. Using MQL you should be able to define a query that describes your event, although the documentation might not be comprehensive for your specific case. If you get stuck, feel free to ask a question in the dedicated forum. In the following section I show how to do it for a specific user story.

After you have built the query that shows the data capturing your event, you can define one of two conditions that finalize the event’s description and trigger the alert:

- The condition operator lets you describe a threshold condition that fires the event or, in the case of Google Cloud, opens an alerting incident.

- The absent_for operator lets you describe an event that should be triggered in the absence of data. It is useful when you want to describe an event defined by missing data, for example, no responses from your deployed application or no queries to a database.

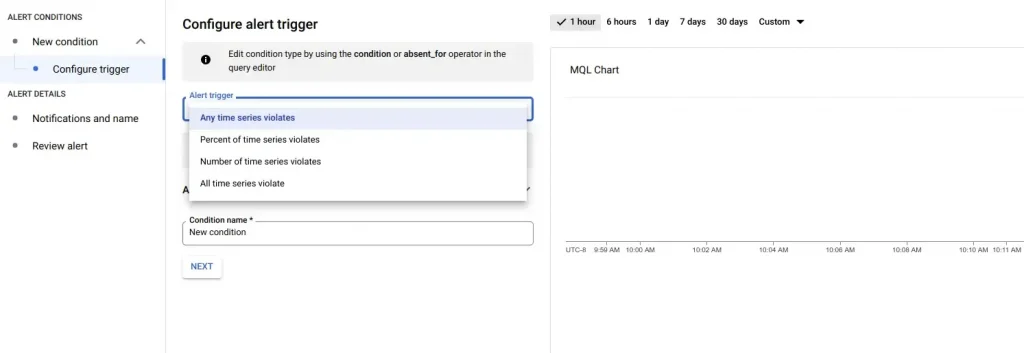

Then you can set up additional constraints for triggering the event: whether you want it to be triggered each time the conditions are fulfilled, or only sometimes, based on the percentage or number of time series that violate the condition. To stay as close as possible to the usual actionable events, I recommend selecting the first option, “Any time series violates”.
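
The difference between these options can be illustrated with a small Go sketch. The type and function names are hypothetical, and the "at least N" reading of the percentage and number options is an assumption about how the thresholds are compared.

```go
package main

import "fmt"

// Mode names the trigger options for illustration only.
type Mode int

const (
	AnySeries       Mode = iota // any time series violates
	PercentOfSeries             // at least n percent of time series violate (assumed)
	NumberOfSeries              // at least n time series violate (assumed)
)

// shouldTrigger decides whether the alert fires, given one
// violation flag per time series.
func shouldTrigger(violations []bool, mode Mode, n float64) bool {
	count := 0
	for _, v := range violations {
		if v {
			count++
		}
	}
	switch mode {
	case AnySeries:
		return count > 0
	case PercentOfSeries:
		if len(violations) == 0 {
			return false
		}
		return 100*float64(count)/float64(len(violations)) >= n
	case NumberOfSeries:
		return float64(count) >= n
	}
	return false
}

func main() {
	// One of four time series violates the condition.
	violations := []bool{true, false, false, false}
	fmt.Println(shouldTrigger(violations, AnySeries, 0))        // one violation is enough
	fmt.Println(shouldTrigger(violations, PercentOfSeries, 50)) // only 25% violate
	fmt.Println(shouldTrigger(violations, NumberOfSeries, 1))   // one series violates
}
```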

Now, let’s look at an example of how it all works.

Lead by example or “Can you do it in Google Cloud” challenge

Some time ago a friend asked me whether it is possible to deploy a new version of a Cloud Function and then roll it back if the deployed version does not perform well enough. My friend said that this is possible in Azure, but it seemed to him that there is no such functionality in Google Cloud. I took up the challenge because I was sure that it is “there”.

So, I ended up creating a simple Cloud Function that returns status 200 on the “good” path and status 404 on any other (“bad”) path.

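package cloudfunctiondemo

import (
	"fmt"
	"net/http"
)

// Ping replies "pong" with status 200 on the "/good" path
// and with status 404 on any other path.
func Ping(w http.ResponseWriter, r *http.Request) {
	if r.URL.Path == "/good" {
		fmt.Fprint(w, "pong")
		return
	}
	http.NotFound(w, r)
}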
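
Before deploying, the two paths can be checked locally. The following sketch copies the function into a small program and calls it in-process with the standard `net/http/httptest` package; the helper `statusFor` is a made-up name.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
)

// Ping is the function from the article, copied so the sketch is self-contained.
func Ping(w http.ResponseWriter, r *http.Request) {
	if r.URL.Path == "/good" {
		fmt.Fprint(w, "pong")
		return
	}
	http.NotFound(w, r)
}

// statusFor calls Ping in-process and returns the HTTP status code for the path.
func statusFor(path string) int {
	req := httptest.NewRequest(http.MethodGet, path, nil)
	rec := httptest.NewRecorder()
	Ping(rec, req)
	return rec.Code
}

func main() {
	fmt.Println("/good ->", statusFor("/good")) // 200
	fmt.Println("/bad ->", statusFor("/bad"))   // 404
}
```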

I deployed it to Cloud Functions and then created an alert policy that is supposed to capture the following event and send a Pub/Sub message each time the event takes place:

The error rate of the responses from my Cloud Function instance is greater than 20% for 5 minutes

In other words, if fewer than 80% of the requests are OK for more than 5 consecutive minutes, then I want to roll back to the previous version.

So, to convert the above into MQL I needed metrics that would help me find the “Cloud Function response error rate”. I looked at the metrics that Cloud Functions reports in Google Cloud and found the function/execution_count metric, which accumulates the total number of executions of the function and has a status attribute that can be “ok”, “error”, etc. So, to know the rate I need to know how many executions ended with a status other than "ok" versus the total number of executions. Looking into the MQL documentation and examples helped me compose the following query with the condition inside:

fetch cloud_function
| metric 'cloudfunctions.googleapis.com/function/execution_count'
| {
    filter status != 'ok'
  ;
    ident
  }
| group_by drop[status], sliding(5m), .sum
| ratio
| scale '%'
| every (30s)
| condition val() > 20'%'

Let me explain it, so that it is easy for you to build something similar yourself. The first operator (line 1) defines the type of the monitored resource for which we are fetching the data. The second operator (line 2) queries the metric that I found. The third operator (starting at line 3) is a complex one. It is a group operator that combines the filtered result of line 2 with the “identity” table, which is essentially the whole time series of the metric. The following operators implement the ratio calculation I mentioned earlier. In this particular query they sum up the tables in sliding windows of 5 minutes and calculate the ratio of the first table (the one with non-OK status) to the second (all counts). Then the query converts the result into a percentage (line 10) and “tells” the system to refresh it every 30 seconds (line 11). At the end, the condition makes the query alert when the ratio is greater than 20%.
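
The arithmetic that the query performs on one 5-minute window can be mirrored in a short Go sketch. It only restates the calculation for illustration: the function names and sample counts are made up, and returning 0 for an empty window is a choice of this sketch, not a statement about how MQL handles it.

```go
package main

import "fmt"

// errorRatePercent returns the share of executions whose status is not "ok",
// in percent, for the execution counts of a single window.
func errorRatePercent(countsByStatus map[string]int64) float64 {
	var total, notOK int64
	for status, c := range countsByStatus {
		total += c // the "ident" branch: all executions
		if status != "ok" {
			notOK += c // the filtered branch: status != 'ok'
		}
	}
	if total == 0 {
		return 0 // sketch-only choice for an empty window
	}
	return 100 * float64(notOK) / float64(total)
}

// violates applies the alert condition: error rate strictly above the threshold.
func violates(countsByStatus map[string]int64, thresholdPercent float64) bool {
	return errorRatePercent(countsByStatus) > thresholdPercent
}

func main() {
	atThreshold := map[string]int64{"ok": 80, "error": 20}
	aboveThreshold := map[string]int64{"ok": 70, "error": 30}

	// Exactly 20% does not satisfy "greater than 20%".
	fmt.Printf("%.1f%% alert=%v\n", errorRatePercent(atThreshold), violates(atThreshold, 20))
	// 30% does.
	fmt.Printf("%.1f%% alert=%v\n", errorRatePercent(aboveThreshold), violates(aboveThreshold, 20))
}
```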

After defining the alert to fire when “Any time series violates” and adding a Pub/Sub notification channel, I had an actionable event without an action. The remaining work was straightforward. I defined a Cloud Build trigger that fires on receiving a Pub/Sub message and connected it to the same Pub/Sub topic to which the alert sends its notifications. Then I implemented the Cloud Function rollback in a Cloud Build script. I am not including the code here because it was a proof of concept based on an undocumented feature of Cloud Functions.

Long story short, it is not easy to implement actionable events in Google Cloud. We are working to make it simpler, but it might take time. However, there is nothing that you cannot do in Google Cloud in this regard. Reach out to me if you have a specific use case that is giving you trouble.

The original article was published on Medium.